For years, platform operators faced a paradox at the heart of COPPA compliance: you needed to collect personal information to determine whether a user was a child, but collecting that information from someone who turned out to be a child could itself violate COPPA. The result was regulatory paralysis. Many operators simply avoided age verification altogether, relying on self-declared birthdates that everyone knew were ineffective.

On February 25, 2026, the Federal Trade Commission resolved this catch-22 with a policy statement that fundamentally changes the compliance calculus. The Commission announced it will not bring enforcement actions against operators who collect personal information solely for the purpose of age verification, provided they meet a specific set of conditions. This isn’t a rule change; it’s an exercise of enforcement discretion. For practical purposes, though, it works as a de facto safe harbor (not to be confused with COPPA’s formal Safe Harbor program for FTC-approved self-regulatory guidelines) and removes the biggest obstacle to deploying real age verification on general-audience platforms.

Here’s what it means, what it requires, and where the gaps are.

The Core Problem the FTC Is Solving

COPPA requires operators of websites and online services directed at children — or that have actual knowledge they’re collecting data from children under 13 — to obtain verifiable parental consent before collecting personal information. The rule was designed to protect children, but it created a perverse incentive: if you don’t check ages, you don’t have “actual knowledge” of child users, and you can argue COPPA doesn’t apply.

This loophole has been widely criticized. The FTC, Congress, child safety advocates, and even some platform operators have acknowledged that it effectively punishes companies that try to do the right thing. If you deploy age verification, you might discover child users on your platform — triggering COPPA obligations you wouldn’t have if you stayed willfully ignorant.

The February 2026 policy statement addresses this head-on. By signaling that the Commission won’t prosecute the act of collecting age verification data itself, it removes the penalty for looking.

What the Safe Harbor Requires

The policy statement isn’t a blank check. To qualify for enforcement discretion, operators must satisfy six conditions:

1. Purpose limitation. Personal information collected for age verification must be used solely to determine a user’s age. You cannot repurpose selfie images, ID scans, or any other data collected during verification for marketing, profiling, analytics, or any other purpose.

2. Prompt deletion. Age verification data must be deleted promptly after it is no longer needed to fulfill the age determination purpose. The FTC doesn’t define “promptly” with a specific timeframe, but the intent is clear: don’t warehouse biometric data or identity documents after verification is complete.

3. Limited third-party disclosure. If you share age verification data with third-party vendors (as most operators will, since few build verification in-house), you must take reasonable steps to ensure those third parties can maintain the confidentiality, security, and integrity of the data. This includes obtaining written assurances from those vendors.

4. Clear notice. Operators must provide clear notice to parents and users about what personal information is collected for age verification, how it’s used, and how it’s protected.

5. Reasonable security. Standard data security obligations apply to age verification data — encryption, access controls, and other measures proportionate to the sensitivity of the data being collected.

6. Accuracy. Operators must take reasonable steps to ensure the age verification method they use is likely to produce accurate results. This rules out purely theatrical measures like checkbox age gates, but the FTC hasn’t specified minimum accuracy thresholds.
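Conditions 1 and 2 translate directly into code structure. The TypeScript sketch below assumes a server-side flow in which the raw verification artifact (an ID scan or selfie) arrives as a byte buffer. `determineAge`, `AgeDecision`, and the `AgeEstimator` callback are hypothetical names, not any vendor’s API. The raw bytes are used for exactly one purpose inside one function call and overwritten before it returns, so only the age decision survives.

```typescript
// Hypothetical result type: the only thing that survives verification.
interface AgeDecision {
  meetsThreshold: boolean;
  threshold: number;
}

// Hypothetical estimator signature; a real system would call a model or vendor SDK here.
type AgeEstimator = (artifact: Uint8Array) => number;

// Uses the raw artifact solely to decide age (purpose limitation), then
// overwrites it before returning (prompt deletion, best effort: the JS
// runtime or other systems may still hold copies of the data).
function determineAge(
  artifact: Uint8Array,
  threshold: number,
  estimate: AgeEstimator,
): AgeDecision {
  try {
    const estimatedAge = estimate(artifact);
    return { meetsThreshold: estimatedAge >= threshold, threshold };
  } finally {
    // Runs on success and on error, so the buffer is wiped either way.
    artifact.fill(0);
  }
}

const scan = new Uint8Array([1, 2, 3, 4]);
const decision = determineAge(scan, 13, () => 15);
console.log(decision); // { meetsThreshold: true, threshold: 13 }
console.log(scan.every((b) => b === 0)); // true
```

Overwriting the buffer is hygiene, not proof of deletion: copies in logs, queues, object storage, or a vendor’s systems need their own deletion path, which is what the “promptly” requirement ultimately targets.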

What the Policy Doesn’t Cover

Several important limitations are worth noting.

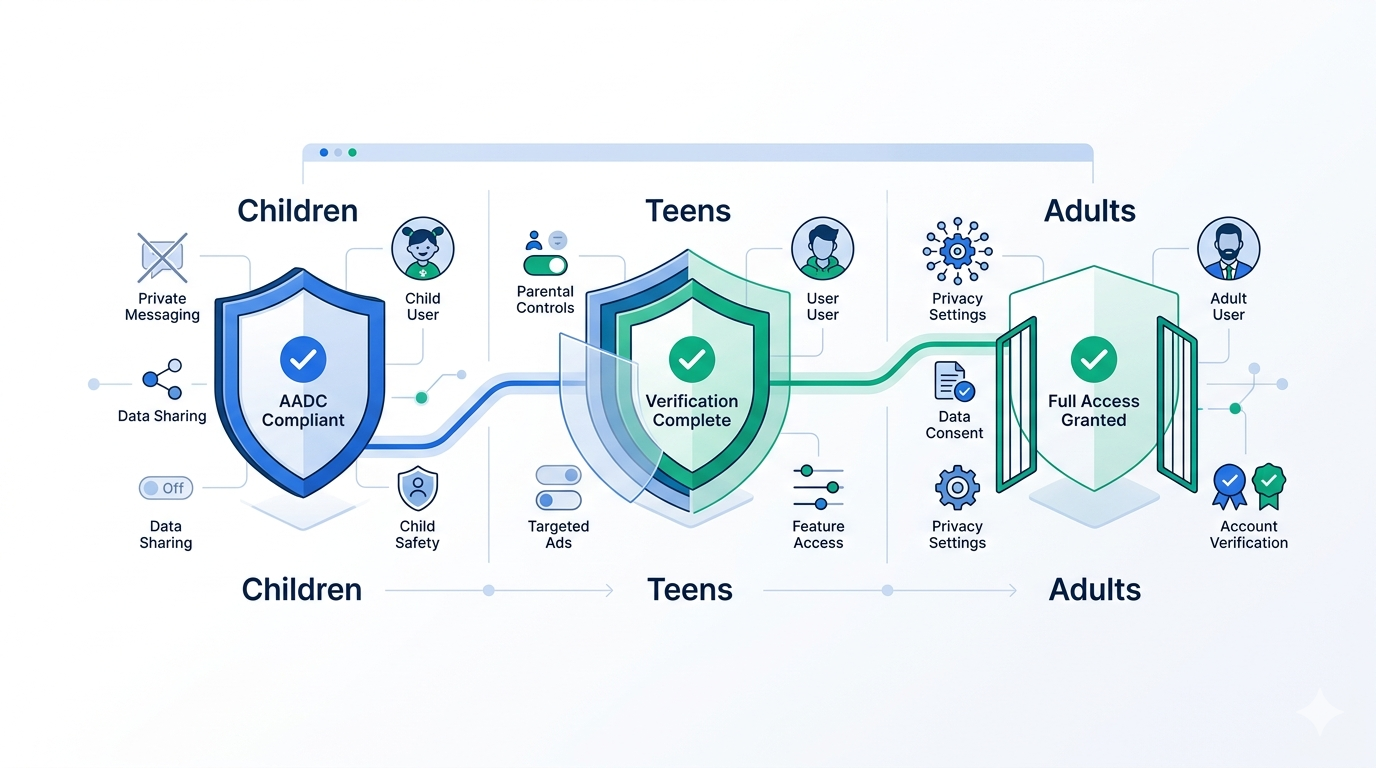

Child-directed sites are excluded. The safe harbor applies only to general-audience and mixed-audience platforms. If your site is primarily directed at children under 13, the FTC’s longstanding position is that you should assume your users are children and comply with COPPA accordingly. You don’t get to use age verification as an escape hatch.

It doesn’t cover data retention for returning users. The policy statement explicitly does not provide safe harbor protection for retaining age verification data to help operators prevent future attempts to circumvent their age policies. If you want to store a token or credential so verified users don’t have to re-verify on every visit, that’s a separate compliance question.

It doesn’t cover training data. The FTC specifically notes that the policy statement does not contemplate the collection or use of children’s information to build datasets used to train age estimation algorithms. If your verification vendor is training ML models on the biometric data it collects, that’s outside the safe harbor.

It’s temporary. The Commission has indicated it intends to initiate a formal rulemaking to address age verification under COPPA. This policy statement remains effective until final rule amendments are published — which could be months or years. But the direction of travel is clear.

Why This Matters Now

The timing isn’t coincidental. The FTC’s policy statement arrives in a regulatory environment where age verification is no longer optional:

- Over 25 US states have enacted age verification laws, with at least 18 new bills introduced in 2026 alone

- The UK’s Online Safety Act enforcement is in full swing, with Ofcom issuing fines and conducting 80+ investigations

- France has banned social media for under-15s, effective September 2026

- Germany has introduced payment-blocking enforcement for non-compliant platforms

- The EU Digital Identity Wallet must be available in all 27 member states by December 2026

The FTC’s move signals that the US federal government is aligning with this global trend. Rather than continuing to treat age verification data collection as a COPPA liability, the Commission is recognizing it as a necessary tool for child protection — provided it’s done responsibly.

The Architecture Question

The six conditions in the FTC’s safe harbor aren’t just legal requirements. They’re an architectural specification. And they strongly favor a specific design pattern: on-device processing with minimal data transmission.

Consider the requirements through the lens of system design:

Purpose limitation and prompt deletion are trivially satisfied when biometric processing happens on the user’s device and only a binary age result (over/under threshold) leaves the client. There’s no server-side biometric data to repurpose or retain because it was never transmitted.
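Here is a minimal sketch of what “only a binary age result leaves the client” can look like, assuming an on-device model has already produced a numeric age estimate. `AgeAssertionPayload` and `buildPayload` are hypothetical names. The comparison against the threshold happens locally, and the serialized payload carries only the boolean, the threshold, and an issue time.

```typescript
// Hypothetical wire format: deliberately excludes the image, any embeddings,
// and even the numeric age estimate.
interface AgeAssertionPayload {
  over: boolean;     // true if the estimate met the threshold
  threshold: number; // e.g. 13 for COPPA
  issuedAt: number;  // Unix time in seconds
}

// Runs on the client after local inference. Only the returned JSON string
// is meant to be transmitted; estimatedAge never leaves this function.
function buildPayload(estimatedAge: number, threshold: number, nowMs: number = Date.now()): string {
  const payload: AgeAssertionPayload = {
    over: estimatedAge >= threshold,
    threshold,
    issuedAt: Math.floor(nowMs / 1000),
  };
  return JSON.stringify(payload);
}

console.log(buildPayload(16.4, 13, 1700000000000));
// {"over":true,"threshold":13,"issuedAt":1700000000}
```

Leaving out the numeric estimate is deliberate: the exact estimate reveals more than the yes/no answer the operator actually needs.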

Limited third-party disclosure becomes a non-issue when no biometric data is shared with third parties at all. If your age estimation model runs in the browser via WebAssembly, the only data that reaches your servers — or any third party — is a cryptographically signed assertion that the user met the age threshold.
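The signed assertion can be sketched with Ed25519 signatures from Node’s built-in `node:crypto` module. This is an illustration under stated assumptions, not Xident’s token format: the helpers `issueToken` and `verifyToken` are hypothetical, the token is simply the base64url-encoded payload and signature joined by a dot, and the signing key is assumed to belong to an issuer the user cannot impersonate. A key held in ordinary browser storage could be extracted, so how the signing key is protected is a design question this sketch does not answer.

```typescript
import { generateKeyPairSync, sign, verify, KeyObject } from "node:crypto";

// Hypothetical issuer key pair. In practice the private key stays with the issuer.
const { privateKey, publicKey } = generateKeyPairSync("ed25519");

// Signs the minimal payload. Ed25519 takes `null` as the digest algorithm.
function issueToken(payloadJson: string, key: KeyObject): string {
  const body = Buffer.from(payloadJson, "utf8");
  const sig = sign(null, body, key);
  return `${body.toString("base64url")}.${sig.toString("base64url")}`;
}

// Verifies the signature and returns the payload, or null if the token was altered.
function verifyToken(token: string, key: KeyObject): { over: boolean; threshold: number } | null {
  const [bodyB64, sigB64] = token.split(".");
  if (!bodyB64 || !sigB64) return null;
  const body = Buffer.from(bodyB64, "base64url");
  const sig = Buffer.from(sigB64, "base64url");
  if (!verify(null, body, key, sig)) return null;
  return JSON.parse(body.toString("utf8"));
}

const token = issueToken(JSON.stringify({ over: true, threshold: 13 }), privateKey);
console.log(verifyToken(token, publicKey)); // { over: true, threshold: 13 }
```

In production the payload would also need an expiry and a binding to the session or relying party, so a captured token cannot be replayed elsewhere; the sketch omits both for brevity.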

Reasonable security is easier to achieve when you’re protecting a signed boolean assertion rather than a facial image or ID scan. The attack surface shrinks dramatically: the main remaining risks are token forgery and replay, not a breach of sensitive images.

Accuracy requirements are met when your model can demonstrate performance against recognized benchmarks — something that’s independently measurable regardless of where the model runs.
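Because accuracy can be measured independently of where the model runs, an operator can evaluate any vendor’s model on a labeled test set. The TypeScript sketch below computes two common figures: mean absolute error (MAE) in years, and the share of subjects truly under the threshold whom the model places at or above it (a false-acceptance rate for the age gate). The choice of metrics is our assumption; the FTC has not named metrics or minimum thresholds, and the sample data is invented purely to exercise the code.

```typescript
interface Sample {
  trueAge: number;      // ground-truth age from the labeled test set
  estimatedAge: number; // model output for the same subject
}

// Mean absolute error in years (NaN for an empty set, since 0 / 0 is NaN).
function meanAbsoluteError(samples: Sample[]): number {
  const total = samples.reduce((sum, s) => sum + Math.abs(s.estimatedAge - s.trueAge), 0);
  return total / samples.length;
}

// Fraction of subjects truly under `threshold` whom the model places at or above it.
function falseAcceptanceRate(samples: Sample[], threshold: number): number {
  const minors = samples.filter((s) => s.trueAge < threshold);
  if (minors.length === 0) return 0;
  const accepted = minors.filter((s) => s.estimatedAge >= threshold).length;
  return accepted / minors.length;
}

// Toy data invented for illustration; not benchmark results.
const toySet: Sample[] = [
  { trueAge: 10, estimatedAge: 11 },
  { trueAge: 12, estimatedAge: 14 },
  { trueAge: 16, estimatedAge: 15 },
  { trueAge: 30, estimatedAge: 28 },
];

console.log(meanAbsoluteError(toySet));       // 1.5
console.log(falseAcceptanceRate(toySet, 13)); // 0.5
```

The two numbers answer different questions: a model can have a low overall MAE and still misclassify many subjects near the under-13 line, which is where a COPPA age gate actually operates.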

This is exactly the architecture Xident was built around. Our on-device age estimation model processes facial features entirely in the browser using ONNX Runtime and WebAssembly. No biometric data leaves the user’s device. The only information transmitted to your servers is a signed verification token confirming the user meets the required age threshold. When the user returns, a passkey-bound credential eliminates the need for re-verification entirely.

This approach doesn’t just comply with most of the FTC’s conditions; it largely sidesteps them by eliminating the data flows they’re designed to constrain. Notice and accuracy obligations still apply regardless of architecture.

What Platform Operators Should Do

If you operate a general-audience or mixed-audience platform with US users, the FTC has removed your biggest excuse for not implementing age verification. Here’s a practical roadmap:

Audit Your Current Approach

If you’re relying on self-declared birthdates or checkbox age gates, recognize that these are increasingly untenable — both legally and reputationally. The FTC’s accuracy requirement means your verification method must be “likely to produce accurate results.” Self-declaration doesn’t meet that bar.

Evaluate Vendor Architecture

Not all age verification vendors are created equal. When evaluating solutions, map each vendor’s data flows against the FTC’s six conditions:

| Condition | Cloud-Based Verification | On-Device Verification |
|---|---|---|
| Purpose limitation | Requires policy enforcement | Enforced by architecture |
| Prompt deletion | Requires active data management | No biometric data to delete |
| Third-party disclosure | Vendor receives biometric data | No biometric data transmitted |
| Clear notice | Required | Required (but simpler) |
| Security | Must protect biometric data at rest and in transit | Only protects verification tokens |
| Accuracy | Depends on model | Depends on model |

Plan for Returning Users

The FTC’s safe harbor doesn’t cover credential retention for returning users, but that doesn’t mean you can’t implement it — you just need a separate legal basis. Token-based systems that store a passkey-bound age credential on the user’s device avoid the problem entirely: there’s nothing on your servers to retain.
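The returning-user pattern can be sketched as a challenge-response check. This is a simplified stand-in for a passkey, not WebAuthn itself: a real passkey flow uses `navigator.credentials.get()` in the browser and a WebAuthn verification library on the server, and the source does not describe exactly how Xident binds its credential. As assumptions, the device holds an Ed25519 key pair, an issuer has signed a credential containing the device’s public key and the age result, and the server keeps only a short-lived random challenge. All helper names are hypothetical.

```typescript
import { generateKeyPairSync, sign, verify, randomBytes, createPublicKey, KeyObject } from "node:crypto";

// Hypothetical issuer and device keys (the device key stands in for a passkey).
const issuer = generateKeyPairSync("ed25519");
const device = generateKeyPairSync("ed25519");

// Credential issued once after a successful verification and stored on the device.
const credentialBody = Buffer.from(JSON.stringify({
  over: true,
  threshold: 13,
  devicePublicKey: device.publicKey.export({ type: "spki", format: "der" }).toString("base64"),
}));
const credentialSig = sign(null, credentialBody, issuer.privateKey);

// Returning visit: the server sends a fresh challenge and holds it only until the reply.
const challenge = randomBytes(32);

// Device side: prove possession of the bound key by signing the challenge.
const challengeSig = sign(null, challenge, device.privateKey);

// Server side: check the issuer's signature, then the device's proof of possession.
// Nothing about the user needs to be stored once this returns.
function acceptReturningUser(
  body: Buffer,
  bodySig: Buffer,
  chal: Buffer,
  chalSig: Buffer,
  issuerKey: KeyObject,
): boolean {
  if (!verify(null, body, issuerKey, bodySig)) return false;
  const cred = JSON.parse(body.toString("utf8"));
  const deviceKey = createPublicKey({
    key: Buffer.from(cred.devicePublicKey, "base64"),
    format: "der",
    type: "spki",
  });
  return cred.over === true && verify(null, chal, deviceKey, chalSig);
}

console.log(acceptReturningUser(credentialBody, credentialSig, challenge, challengeSig, issuer.publicKey)); // true
```

The challenge must be single-use and short-lived, or a recorded response could be replayed. The credential here also has no expiry, which a real design would add.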

Document Your Compliance

The policy statement creates enforcement discretion, not a legal right. Document your age verification implementation, data flows, deletion procedures, and vendor agreements. If the FTC ever does investigate, you want a clear paper trail showing you met all six conditions.
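One way to keep that paper trail current is to record it as data next to the code, so a build or review step can flag gaps. The TypeScript sketch below is a hypothetical structure, not an FTC-prescribed format: one entry per condition, each pointing at its evidence (a design doc, a deletion job, a vendor agreement), plus a check that lists any of the six conditions still lacking evidence. The file path and date in the example entry are placeholders.

```typescript
// The six conditions as summarized in this article.
const CONDITIONS = [
  "purposeLimitation",
  "promptDeletion",
  "thirdPartyDisclosure",
  "clearNotice",
  "reasonableSecurity",
  "accuracy",
] as const;

type Condition = (typeof CONDITIONS)[number];

// Hypothetical evidence entry: where the proof lives and when it was last reviewed.
interface Evidence {
  description: string;
  location: string;     // a path or document ID in your own records
  lastReviewed: string; // ISO 8601 date
}

type ComplianceRecord = Partial<Record<Condition, Evidence[]>>;

// Returns the conditions that have no documented evidence yet.
function missingConditions(record: ComplianceRecord): Condition[] {
  return CONDITIONS.filter((c) => (record[c] ?? []).length === 0);
}

const record: ComplianceRecord = {
  purposeLimitation: [
    {
      description: "Data-flow diagram: only a signed boolean leaves the client",
      location: "docs/age-verification/dataflow.md", // placeholder path
      lastReviewed: "2026-03-01",                    // placeholder date
    },
  ],
  promptDeletion: [],
};

// Lists the five conditions other than purposeLimitation.
console.log(missingConditions(record));
```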

Looking Ahead

The FTC has signaled a formal COPPA rulemaking is coming. When it arrives, the conditions in this policy statement will likely form the baseline for permanent rules. Operators who align their systems now will be ahead of the curve.

More broadly, the policy statement reflects a global regulatory consensus that’s been building for two years: age verification is no longer optional, privacy must be preserved in the verification process, and the burden of proof is shifting from “why should we verify” to “why haven’t we verified yet.”

The catch-22 that paralyzed COPPA compliance for a decade is resolved. The question now is execution.

Xident’s on-device age verification is designed to meet and exceed the FTC’s safe harbor conditions by default. Our architecture processes biometric data entirely on the user’s device, transmitting only a signed age verification token, which makes purpose limitation, prompt deletion, and data minimization architectural guarantees rather than policy commitments.