If you’re building an AI chatbot or generative AI product, age verification just moved from “nice to have” to “ship-blocking legal requirement.” The regulatory window is closing fast, and the penalties for getting it wrong are no longer hypothetical.

In February 2026, the UK’s Information Commissioner’s Office fined Reddit £14.47 million for failing to protect children’s data — the ICO’s largest children’s-privacy penalty to date. The core failure: Reddit relied on self-declaration for age assurance, which the ICO concluded was trivially easy to bypass. That same enforcement logic is now being applied to AI platforms, and the stakes are even higher.

Here’s what’s happening, why it matters for AI builders, and what you need to do about it.

The Regulatory Wave Hitting AI Chatbots

Three major regulatory tracks are converging on AI chatbot platforms simultaneously:

The US GUARD Act

The Guidelines for User Age-verification and Responsible Dialogue (GUARD) Act, introduced as S.3062 in the 119th Congress, would impose the most prescriptive age verification requirements ever applied to AI chatbots.

The bill’s core mandates are significant. Every covered AI companion must require user accounts and verify age at account creation — and periodically re-verify after that. If age verification determines a user is a minor, the platform must prohibit access entirely. No graduated access, no parental consent bypass — full prohibition.

The bill defines “reasonable age verification” largely by exclusion: having a user confirm they’re not a minor, or asking for a birthdate, is explicitly insufficient. IP-based inference and shared device indicators don’t qualify either. You need government-issued identification or an equivalent proven method.

Violations carry civil and criminal penalties up to $100,000 per incident. As of late March 2026, the bill is advancing through House committee votes alongside companion social media age verification legislation.

Australia’s A$49.5 Million Enforcement Regime

On March 9, 2026, six new age verification codes took effect in Australia. These aren’t guidelines — they’re enforceable codes backed by the eSafety Commissioner’s explicit promise to use “the full range of enforcement powers” against non-compliant services.

The codes require any AI chatbot or generative AI service capable of producing sexually explicit content, high-impact violence, or self-harm material to verify users are 18+ — either at login or before generating restricted content. The penalty ceiling is A$49.5 million (roughly US$34.5 million) per breach.
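The “before generating restricted content” option can be sketched as a check that runs just ahead of generation. This is a minimal illustration, not the codes’ legal test: the category labels, the `Session` type, and the idea that a classifier has already predicted the categories a reply would touch are all assumptions.

```python
from dataclasses import dataclass

# Hypothetical labels; the codes' actual legal definitions are more detailed.
RESTRICTED_CATEGORIES = {"sexually_explicit", "high_impact_violence", "self_harm"}


@dataclass
class Session:
    user_id: str
    verified_adult: bool = False  # set only after a real age check succeeds


def may_generate(session: Session, predicted_categories: set[str]) -> bool:
    """Allow generation unless it touches a restricted category without an 18+ check."""
    touches_restricted = bool(predicted_categories & RESTRICTED_CATEGORIES)
    return session.verified_adult or not touches_restricted


anonymous = Session(user_id="u1")
adult = Session(user_id="u2", verified_adult=True)

print(may_generate(anonymous, {"cooking"}))        # True
print(may_generate(anonymous, {"self_harm"}))      # False
print(may_generate(adult, {"sexually_explicit"}))  # True
```

A `False` result is the point where you would route the user into an age check instead of simply refusing.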

How prepared is the industry? A review of 50 leading text-based AI chat services operating in Australia found that only nine had introduced or announced age assurance measures. Eleven platforms chose to apply blanket content filters or planned to block Australian users entirely — an approach that sacrifices market access instead of solving the compliance problem.

The eSafety Commissioner has also signaled that enforcement may extend to gatekeeper platforms — search engines and app stores — that serve as primary access points for non-compliant AI services.

The UK Online Safety Act and EU Alignment

The UK’s Online Safety Act already requires “highly effective” age assurance for platforms accessible to children. The Reddit fine came from the ICO rather than from Ofcom, which enforces the Act, but together the two regulators are treating inadequate age verification as a primary enforcement vector, not a secondary concern.

The European Data Protection Board has weighed in with a critical constraint: age assurance must be “the least intrusive possible,” and children’s personal data must receive the highest protection. This creates a design tension — verification must be effective enough to satisfy regulators but privacy-preserving enough to satisfy data protection authorities. Self-declaration fails on both counts.

At the US state level, California’s SB 243, signed in October 2025, adds requirements specifically targeting AI companion chatbots, including periodic bot disclaimers and break alerts for minors.

Why Self-Declaration Is Dead

The Reddit fine crystallized something the industry needed to hear: self-declaration is not age verification. The ICO found that “a large number of under-13s had been able to access the service without any checks” and concluded that self-declaration “presents risks to children as it is easy to bypass.”

This finding has immediate implications for every AI platform currently using birthdate gates, checkbox confirmations, or terms-of-service acceptance as their “age verification” mechanism. Regulators across jurisdictions are converging on the same conclusion: if a 12-year-old can bypass your age check by typing “1990” as their birth year, you don’t have age verification — you have a liability.

The shift is from intent-based compliance (“we asked them their age”) to outcome-based compliance (“we demonstrably prevented minors from accessing restricted content”). This is the standard that Reddit failed and that the GUARD Act would codify into law.

What Effective Age Assurance Looks Like for AI Platforms

Building age assurance into an AI chatbot differs from traditional e-commerce or content platform verification. AI platforms face unique challenges: conversations are dynamic, content restrictions depend on what the model generates (not just what’s hosted), and the interaction model is continuous rather than transactional.

Verification at the Gate, Not After the Fact

The GUARD Act’s requirement to verify age at account creation, before any interaction occurs, maps cleanly to the right architectural pattern. Verify at onboarding, then persist the verified age status as a session-level or account-level attribute until the next scheduled re-verification.

This is where token-based returning user patterns matter. A user who has verified once shouldn’t need to re-verify on every session. A privacy-preserving age token — proving “this user is 18+” without revealing their identity or birthdate — creates the right balance between compliance and user experience.
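Here is a rough sketch of what such a token could look like, using only Python’s standard library. It is an assumption-laden illustration: the claim fields, the `SECRET_KEY`, and both function names are made up for this example, and a production system would more likely use asymmetric signatures so that relying platforms never hold the signing key.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET_KEY = b"demo-only-secret"  # assumption: a managed signing key in practice


def issue_age_token(over_18: bool, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Sign a claim that holds only the threshold result and an expiry time."""
    issued = time.time() if now is None else now
    claim = {"over_18": over_18, "exp": int(issued) + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claim, sort_keys=True).encode())
    signature = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return f"{payload.decode()}.{signature}"


def check_age_token(token: str, now: Optional[float] = None) -> bool:
    """Return True only for an untampered, unexpired token asserting 18+."""
    current = time.time() if now is None else now
    try:
        payload, signature = token.split(".")
    except ValueError:
        return False  # not in "payload.signature" form
    expected = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return False
    claim = json.loads(base64.urlsafe_b64decode(payload))
    return bool(claim["over_18"]) and current < claim["exp"]


token = issue_age_token(over_18=True, ttl_seconds=3600)
print(check_age_token(token))  # True while the token is fresh
```

Note what the claim leaves out: no name, no birthdate, no document reference. The expiry gives you a natural hook for periodic re-verification.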

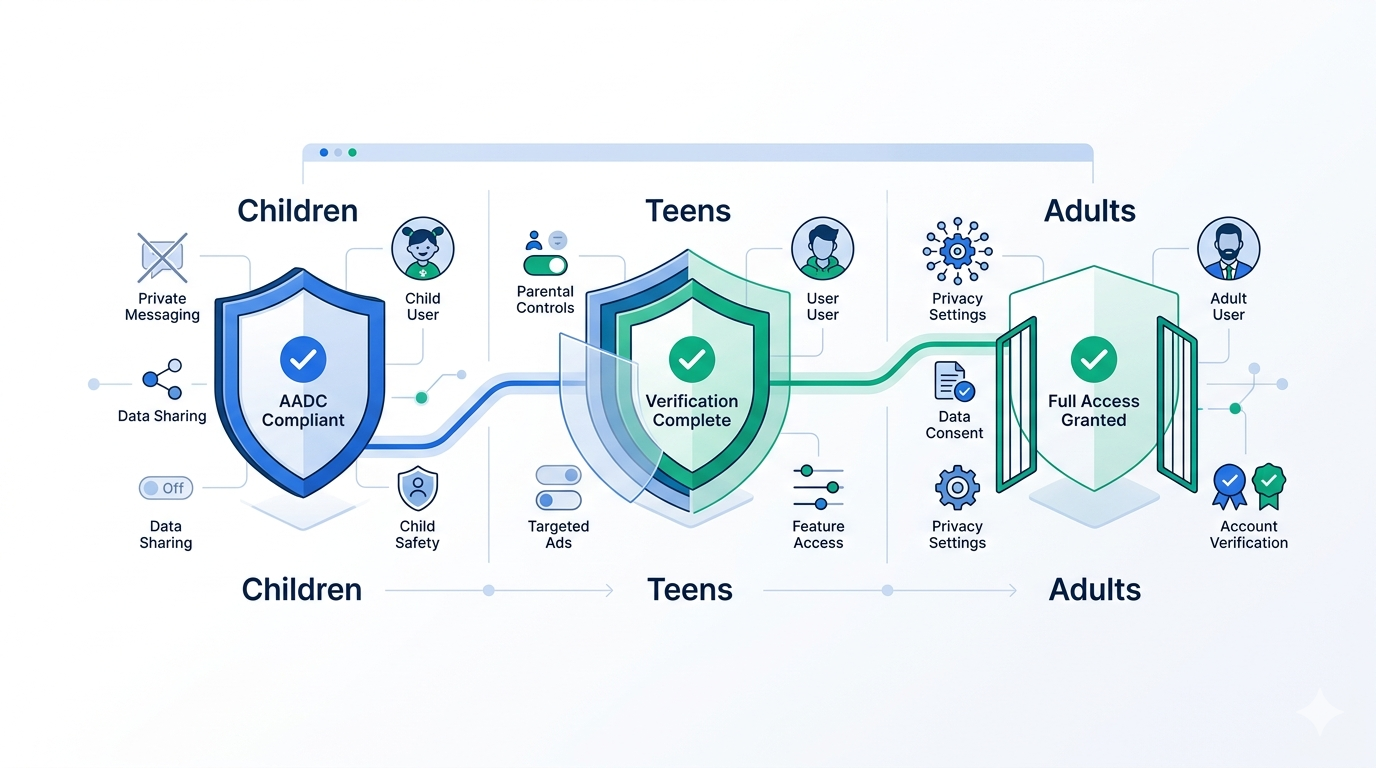

Tiered Response Based on Verified Age

Not every AI platform needs to block minors entirely. Platforms that don’t fall under the GUARD Act’s AI companion definition may still need to implement age-appropriate content controls. The pattern:

- Under 13: Block or require verifiable parental consent (COPPA requirements still apply)

- 13–17: Allow access with enhanced safety settings, content filters, and interaction limits

- 18+: Full access to unrestricted content generation

This maps to OpenAI’s current approach, which uses signals to predict whether an account belongs to someone under 18 and enables extra safety settings when detected. But signal-based prediction is a heuristic, not verification, and regulators are increasingly demanding verification.
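In application code, the three tiers above reduce to a small lookup. The sketch below is one way to express it; the `Policy` fields and the settings in the `POLICIES` table are hypothetical, and the input is assumed to come from a real verification step rather than a self-declared birthdate.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    UNDER_13 = "under_13"
    TEEN = "13_17"
    ADULT = "18_plus"


@dataclass(frozen=True)
class Policy:
    allow_access: bool
    content_filter: bool
    needs_parental_consent: bool


# Hypothetical policy table mirroring the three tiers listed above.
POLICIES = {
    Tier.UNDER_13: Policy(allow_access=False, content_filter=True, needs_parental_consent=True),
    Tier.TEEN: Policy(allow_access=True, content_filter=True, needs_parental_consent=False),
    Tier.ADULT: Policy(allow_access=True, content_filter=False, needs_parental_consent=False),
}


def tier_for(verified_age: int) -> Tier:
    """Map a verified age to its tier; boundaries are 13 and 18."""
    if verified_age < 0:
        raise ValueError("age cannot be negative")
    if verified_age < 13:
        return Tier.UNDER_13
    if verified_age < 18:
        return Tier.TEEN
    return Tier.ADULT


def policy_for(verified_age: int) -> Policy:
    return POLICIES[tier_for(verified_age)]


print(tier_for(12).value, tier_for(13).value, tier_for(18).value)
# under_13 13_17 18_plus
```

Once the tier is known you can discard the exact age and persist only the tier, which keeps the stored data to the minimum the policy needs.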

Privacy-Preserving Methods

The EDPB’s “least intrusive” standard isn’t optional — it’s the regulatory baseline for any platform processing EU user data. Effective approaches include:

Biometric age estimation uses facial analysis to estimate age range without storing biometric data or verifying identity. It answers “is this person likely over 18?” without learning who they are. Accuracy has improved significantly, with leading providers reporting over 98% accuracy at the 18+ threshold.

Zero-knowledge age proofs allow a user to cryptographically prove they meet an age threshold without revealing their actual age or identity. This is the gold standard for privacy preservation but requires credential infrastructure that’s still maturing.

Reusable age credentials — the “verify once, prove everywhere” model — let users verify their age once with a trusted provider and present a privacy-preserving token to multiple platforms. This eliminates redundant verification and reduces the surface area for data collection.

Device-level age signals from Apple and Google’s declared age range APIs provide OS-level age attestation without requiring the app to collect identity documents directly. These are increasingly available but coverage varies by device and OS version.
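To make the threshold-proof idea behind zero-knowledge age proofs more tangible, here is a toy hash-chain construction. It is a much simpler relative of a real zero-knowledge proof system, not a substitute for one: all function names are illustrative, and the sketch assumes the commitment reaches the verifier signed by a trusted issuer, a step that is omitted here.

```python
import hashlib
import hmac
import secrets


def hash_n(value: bytes, times: int) -> bytes:
    """Apply SHA-256 to `value` repeatedly, `times` times."""
    for _ in range(times):
        value = hashlib.sha256(value).digest()
    return value


def issue_credential(true_age: int) -> tuple[bytes, bytes]:
    """Issuer side: return (secret seed for the user, public commitment)."""
    seed = secrets.token_bytes(32)
    return seed, hash_n(seed, true_age)


def make_proof(seed: bytes, true_age: int, threshold: int) -> bytes:
    """User side: walk the chain (age - threshold) steps from the seed."""
    if true_age < threshold:
        raise ValueError("cannot prove a threshold above the credentialed age")
    return hash_n(seed, true_age - threshold)


def verify_proof(proof: bytes, commitment: bytes, threshold: int) -> bool:
    """Verifier side: the remaining `threshold` steps must land on the commitment."""
    return hmac.compare_digest(hash_n(proof, threshold), commitment)


seed, commitment = issue_credential(true_age=25)
proof = make_proof(seed, true_age=25, threshold=18)
print(verify_proof(proof, commitment, threshold=18))  # True
```

The verifier sees only the proof and the commitment, neither of which reveals how many steps separate them from the seed. A user credentialed at 16 cannot produce a passing proof for 18, because that would mean walking the hash chain backwards.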

The Engineering Tradeoffs

For platform builders evaluating age verification integration, the key tradeoffs are:

Friction vs. compliance. More rigorous verification methods (document-based, biometric) have higher drop-off rates but satisfy stricter regulatory standards. Lighter methods (device signals, reusable tokens) have lower friction but may not clear the GUARD Act’s bar, which calls for government-issued identification or an equivalent proven method. The right answer depends on your regulatory exposure.

Build vs. integrate. Building age verification in-house means maintaining document processing, liveness detection, biometric models, and compliance with data handling requirements across jurisdictions. Integrating a purpose-built API handles all of this behind a single endpoint. For most AI startups, the build option is a distraction from their core product.

Verification timing. Verifying at account creation is the simplest pattern and lines up with the GUARD Act’s account-creation requirement. But some platforms may need to verify at content-generation time, especially if the same platform serves both general and restricted content. The architectural choice here affects your entire session management layer.

Data retention. Age verification inherently involves sensitive data. The regulatory consensus is clear: process what you need, delete what you’ve processed, and store only the minimum assertion (e.g., “user is 18+, verified on [date]”). Never store the identity documents or biometric data used for verification.
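The “minimum assertion” idea is easy to show in code. The sketch below assumes a hypothetical `raw_result` dictionary from some verification step; its keys are invented for illustration. Only the threshold outcome, the date, and the method survive into the stored record.

```python
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class AgeAssertion:
    user_id: str
    over_18: bool
    verified_on: date
    method: str


def to_assertion(user_id: str, raw_result: dict, today: date) -> AgeAssertion:
    """Copy only the threshold outcome and method; drop everything else."""
    return AgeAssertion(
        user_id=user_id,
        over_18=bool(raw_result["over_18"]),
        verified_on=today,
        method=str(raw_result["method"]),
    )


# Hypothetical raw output of a verification step, including data you must not keep.
raw = {
    "over_18": True,
    "method": "document",
    "date_of_birth": "1990-04-02",
    "document_image": b"\x89PNG...",
}

record = to_assertion("user-123", raw, today=date(2026, 3, 30))
print(sorted(asdict(record)))
# ['method', 'over_18', 'user_id', 'verified_on']
```

Because `AgeAssertion` has no field for a birthdate or document image, those values cannot leak into storage by accident.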

What This Means for the Market

The AI chatbot age verification mandate is creating a market shift similar to what happened with e-commerce payment compliance (PCI-DSS) and social media content moderation. What starts as a regulatory burden becomes a competitive differentiator.

Platforms that implement robust age verification early will have three advantages: they’ll avoid the headline fines that are now clearly coming, they’ll maintain access to regulated markets (Australia is the first to enforce, not the last), and they’ll build user trust at a moment when AI safety concerns are mainstream.

The platforms that delay — hoping for regulatory rollback or enforcement leniency — are making the same bet Reddit made. The ICO’s £14.47 million answer to that bet should be instructive.

How Xident Fits

Xident was built for exactly this problem. Our API provides age verification that satisfies the strictest regulatory standards — Ofcom, KJM, ARCOM, eSafety Commissioner — while preserving user privacy through token-based returning user flows and on-device processing.

For AI chatbot platforms specifically, the integration pattern is straightforward:

- Trigger verification at account creation using Xident’s SDK or API

- Receive a privacy-preserving age token that persists across sessions

- Enforce age-based access controls in your application layer based on the verified age threshold

- Handle re-verification on the schedule your regulatory environment requires
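The last two steps, enforcement and re-verification, live in your own application layer. The sketch below shows one way they could fit together. It is not Xident’s SDK: the `Account` type, both function names, and the 365-day interval are assumptions chosen for illustration, and the right interval depends on your regulatory environment.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Account:
    user_id: str
    over_18: Optional[bool] = None       # None means never verified
    verified_on: Optional[date] = None


def record_verification(account: Account, over_18: bool, today: date) -> None:
    """Store the outcome of a completed verification on the account."""
    account.over_18 = over_18
    account.verified_on = today


def access_decision(account: Account, today: date, reverify_after_days: int) -> str:
    """Return 'verify', 'deny', or 'allow' for the current request."""
    if account.over_18 is None or account.verified_on is None:
        return "verify"
    if today - account.verified_on >= timedelta(days=reverify_after_days):
        return "verify"  # assertion is stale, so ask again
    return "allow" if account.over_18 else "deny"


acct = Account(user_id="user-123")
print(access_decision(acct, date(2026, 1, 1), reverify_after_days=365))  # verify

record_verification(acct, over_18=True, today=date(2026, 1, 1))
print(access_decision(acct, date(2026, 6, 1), reverify_after_days=365))  # allow
print(access_decision(acct, date(2027, 1, 1), reverify_after_days=365))  # verify
```

A `verify` result is where you would hand off to the verification provider, then call `record_verification` with what comes back.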

No identity documents stored on your infrastructure. No biometric data retained. A single API call returns a verified age assertion, and enforcing it and re-verifying on schedule is what keeps you compliant.

The regulatory clock is running. The GUARD Act is advancing through Congress. Australia is enforcing. The UK is fining. The cost of inaction is measurable in eight figures.

If you’re building an AI chatbot and haven’t integrated age verification yet, start here.