The creator economy crossed $250 billion globally in 2026. Over 207 million active content creators sell subscriptions, courses, digital products, and exclusive content through platforms like OnlyFans, Patreon, Substack, Whop, and Passes. And regulators have finally caught up.

What used to be a problem only for adult content platforms is now everyone’s problem. The EU’s Digital Services Act requires platforms to verify ages for restricted content. The UK Online Safety Act mandates “highly effective” age assurance. In the US, half of states now enforce age verification laws — and they’re expanding scope from pornography to any platform where minors could access harmful content. Substack started locking out creators who hadn’t completed government ID verification. Patreon overhauled its policies around minor safety and adult content. OnlyFans rolled out mandatory liveness detection and periodic re-verification.

If you run a creator platform, the question isn’t whether you need age verification. It’s whether what you have today will survive an enforcement action.

The Dual Verification Problem

Creator platforms have a verification challenge that most industries don’t: you need to verify identity on both sides of the transaction.

Creator verification ensures that the person producing content is who they claim to be, meets minimum age requirements, and complies with record-keeping obligations like 18 USC § 2257 for adult content. This isn’t just an age check — it’s a full identity verification that must be periodically refreshed.

Subscriber verification ensures that consumers accessing age-restricted content actually meet the age threshold. This is where the regulatory pressure is most acute, because it’s the failure mode that generates headlines: a minor accessing content they shouldn’t.

Most platforms have historically focused on creator verification (for payment compliance and fraud prevention) while treating subscriber age gates as an afterthought — a date-of-birth field or a checkbox. That asymmetry is no longer defensible.

The Regulatory Landscape in 2026

US State Laws: The Patchwork Is Now a Quilt

Over 25 US states now mandate some form of age verification for accessing restricted content online. What started with Louisiana’s Act 440 targeting pornographic websites has expanded dramatically:

Texas — HB 1181 requires “reasonable age verification” for platforms that distribute content harmful to minors. The Supreme Court upheld the law in Free Speech Coalition v. Paxton (2025), and enforcement is active. Creator platforms with adult-adjacent content, even fitness, wellness, or art with nudity, may find themselves in scope.

Utah, Virginia, Arkansas, Montana — Similar laws with varying definitions of “harmful to minors” and different verification standards. Some accept third-party age verification services. Others require specific methods like government ID upload.

New York — Proposed legislation would mandate age verification for social media platforms broadly, which includes subscription-based content platforms with social features.

The operational nightmare: each state has slightly different requirements for what constitutes “reasonable” or “commercially available” age verification. A platform serving users across all 50 states needs a verification approach robust enough to satisfy the strictest standard.
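One way to reason about "satisfy the strictest standard" is to rank verification methods and take the maximum requirement across every jurisdiction you serve. The sketch below assumes a simple linear ranking and uses made-up jurisdiction codes (`XA`, `XB`, `XC`); the table values are placeholders, not a reading of any actual statute.

```python
from enum import IntEnum


class Method(IntEnum):
    """Verification methods ordered weakest to strongest (illustrative ranking)."""
    SELF_DECLARATION = 0
    THIRD_PARTY_AGE_CHECK = 1
    GOVERNMENT_ID = 2
    GOVERNMENT_ID_WITH_LIVENESS = 3


# Hypothetical minimums per jurisdiction code. Placeholder values only;
# real entries must come from legal review of each law.
MINIMUM_BY_JURISDICTION = {
    "XA": Method.THIRD_PARTY_AGE_CHECK,
    "XB": Method.GOVERNMENT_ID,
    "XC": Method.GOVERNMENT_ID_WITH_LIVENESS,
}

# Jurisdictions missing from the table fall back to the strongest known
# requirement, so gaps in the table fail safe rather than open.
DEFAULT_MINIMUM = max(MINIMUM_BY_JURISDICTION.values())


def required_method(jurisdictions):
    """Return the single method that satisfies every listed jurisdiction."""
    if not jurisdictions:
        return DEFAULT_MINIMUM
    return max(
        MINIMUM_BY_JURISDICTION.get(code, DEFAULT_MINIMUM)
        for code in jurisdictions
    )
```

In practice the requirements may not sit on a single scale (one law may accept a method another rejects outright), so a real table would likely need per-method allow lists rather than one ranking.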

18 USC § 2257: Record-Keeping for Adult Content

Any platform hosting “actual sexually explicit conduct” must comply with federal record-keeping requirements under 18 USC § 2257. This means:

- Maintaining records proving every creator depicted in explicit content is 18 or older

- Records must include legal name, date of birth, and any aliases

- Records must be available for inspection by the Attorney General

- Non-compliance carries criminal penalties — up to five years for a first offense
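As a rough illustration of the record described above, here is a minimal sketch of a record type holding the three listed fields, plus a check that the creator was 18 or older on the date the content was produced. The class and function names are hypothetical, and the sketch covers only the fields named in the list, not everything a compliance program would retain.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PerformerRecord:
    """Hypothetical record with the fields listed above."""
    legal_name: str
    date_of_birth: date
    aliases: tuple = ()


def age_on(date_of_birth, on_date):
    """Whole years completed between date_of_birth and on_date."""
    years = on_date.year - date_of_birth.year
    # Subtract one if the birthday has not yet occurred in on_date's year.
    if (on_date.month, on_date.day) < (date_of_birth.month, date_of_birth.day):
        years -= 1
    return years


def was_adult_at_production(record, production_date):
    """True if the person in the record was at least 18 on production_date."""
    return age_on(record.date_of_birth, production_date) >= 18
```

The check runs against the production date rather than today's date, because the record has to show the creator's age when the content was made.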

OnlyFans, Fansly, and similar platforms have entire compliance teams dedicated to § 2257. But smaller creator platforms — especially those that allow user-generated content and aren’t specifically positioned as “adult” — often discover they’re in scope only after receiving a legal notice.

EU Digital Services Act (DSA)

The DSA, fully enforceable since February 2024, requires platforms to:

- Implement age verification mechanisms for content restricted to certain age groups

- Conduct systemic risk assessments for platforms classified as Very Large Online Platforms (VLOPs) — those with 45 million+ monthly active EU users

- Provide “clear, easy-to-understand” information about content moderation practices

For creator platforms operating in the EU, the DSA creates a baseline obligation: if your platform hosts content that could be harmful to minors, you must have age verification in place. The specific method isn’t prescribed, but “self-declaration” alone won’t satisfy regulators. The European Commission has signaled that effective age verification requires at least one layer of technical verification beyond a user’s assertion.

UK Online Safety Act

Ofcom’s guidance under the Online Safety Act requires platforms to use “highly effective” age assurance for content that is harmful to children. Ofcom has published specific performance standards: a false positive rate (incorrectly accepting an underage user) below 0.1% is the benchmark.

Self-declaration doesn’t meet this standard. Neither do click-through “are you over 18?” gates. Platforms need technical age verification (document-based, biometric estimation, or credential-based) that can demonstrate measurable accuracy.
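To make "measurable accuracy" concrete, the rate in question is the share of underage test subjects that the system wrongly accepts. The sketch below takes the 0.1% figure quoted above as the target; the function names are illustrative, and how the test population is assembled is left as an assumption.

```python
def false_acceptance_rate(underage_accepted, underage_total):
    """Share of underage test cases that were wrongly accepted as adults."""
    if underage_total <= 0:
        raise ValueError("need at least one underage test case")
    return underage_accepted / underage_total


def meets_benchmark(underage_accepted, underage_total, benchmark=0.001):
    """True if the observed rate is strictly below the benchmark (0.1% by default)."""
    return false_acceptance_rate(underage_accepted, underage_total) < benchmark
```

For example, 4 wrongful acceptances out of 5,000 underage test cases is a rate of 0.08%, which is under the target, while 5 out of 5,000 is exactly 0.1% and is not. Sample size matters here: with only 100 test cases the smallest nonzero rate you can observe is 1%, so a small test set cannot demonstrate a rate this low.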

How the Major Platforms Handle It Today

OnlyFans

OnlyFans has the most mature verification stack in the creator economy, driven by years of regulatory scrutiny and the platform’s proximity to adult content:

- Creator verification: Government-issued photo ID, live selfie with liveness detection (blinking, head movement), photo holding ID with handwritten date, proof of address for payouts

- Re-verification: All creators must re-verify every 12 months

- Subscriber verification: State-by-state implementation based on local law — some states require ID upload before accessing any content

- AI policy: Outright ban on deepfakes, mandatory disclosure of AI-generated content

- Vendor: Yoti and Ondato handle third-party verification

OnlyFans invested early because it had to. The platform nearly lost its banking relationships in 2021 over content moderation concerns, and has since positioned compliance as a competitive advantage.

Patreon

Patreon’s approach is more fragmented:

- Creator verification: Government ID + selfie matching via third-party service; alternative ID options for creators without government-issued documents

- Content categorization: Creators must self-classify content as “Safe for All Audiences” or “Adult/18+”

- Subscriber verification: Age verification is required for patrons accessing adult-marked content, but implementation varies by jurisdiction

- 2026 policy updates: New rules around AI content, minor safety protections, and stricter enforcement of adult content guidelines

Patreon’s challenge is its breadth. The platform hosts everything from podcasters to adult creators to educators. A one-size-fits-all age verification approach doesn’t work when your content spans the full spectrum.

Substack

Substack’s age verification rollout has been rocky:

- Mandatory verification: In late 2025, Substack began requiring government ID verification for all creators

- Enforcement: Creators who didn’t comply were locked out of their accounts — in some cases, losing access to their subscriber lists and revenue

- Geographic variation: Australian users were blocked entirely until they completed verification

- Backlash: The approach was widely criticized for poor communication and heavy-handed enforcement

Substack’s stumble illustrates a critical lesson: how you implement age verification matters as much as whether you implement it. Locking creators out of their livelihoods without clear communication or a reasonable compliance window is a retention killer.

Emerging Platforms (Whop, Passes)

Newer platforms like Whop and Passes are building age verification into their architecture from day one — a significant advantage. Rather than retrofitting compliance onto a platform designed without it, these platforms can:

- Integrate verification at account creation

- Design content access controls around verification status

- Build privacy-preserving verification into the user flow rather than bolting it on
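Designing access controls around verification status can be as simple as making the gate a function of two values: the content's rating and the viewer's verification state. A minimal sketch, with hypothetical enum names and a two-level rating scheme chosen only for illustration:

```python
from enum import Enum


class Rating(Enum):
    ALL_AUDIENCES = "all"
    ADULT = "adult"


class AgeStatus(Enum):
    UNVERIFIED = "unverified"
    VERIFIED_ADULT = "verified_adult"
    VERIFIED_MINOR = "verified_minor"


def can_view(rating, status):
    """Adult-rated content requires a positive adult verification."""
    if rating is Rating.ALL_AUDIENCES:
        return True
    # Unverified users are treated the same as minors: deny by default.
    return status is AgeStatus.VERIFIED_ADULT
```

The design choice worth noting is the default: an unverified viewer is denied adult-rated content rather than allowed until proven underage.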

The competitive dynamic is shifting: age verification compliance is becoming a platform selection criterion for professional creators. Creators who’ve been burned by sudden policy changes on established platforms are choosing platforms with clear, predictable compliance frameworks.

What a Defensible Verification Stack Looks Like

Based on current regulatory requirements across the US, EU, and UK, here’s what a creator platform needs:

For Creator Verification

Document verification — Automated extraction and validation of government-issued IDs. This means checking the document against known templates, verifying security features, extracting data (name, date of birth, document number), and cross-referencing against fraud databases. A selfie with a handwritten date on a piece of paper is not document verification — it’s theater.

Liveness detection — Server-side liveness detection that confirms the person presenting the ID is physically present and not using a photo, video replay, or deepfake. Client-side liveness (checking for basic motion on the user’s device) is better than nothing but is increasingly insufficient against AI-generated attacks, because the check runs on a device the attacker controls. Server-side analysis runs on infrastructure the attacker cannot tamper with, and its result can be signed and logged so that it holds up under audit.

Face matching — Biometric comparison between the selfie/liveness capture and the photo on the government ID. This closes the gap between “someone uploaded a valid ID” and “the person on the ID is the one creating the account.”

Periodic re-verification — One-time verification isn’t enough. Creator accounts are sold, shared, and compromised. Annual or semi-annual re-verification — ideally triggered by risk signals (new device, location change, content type shift) — maintains the integrity of the verification over time.
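The combination of a fixed interval and risk-signal triggers might look like the sketch below. It assumes an annual interval and treats any one of the three signals named above as sufficient to trigger; both choices, along with the field names, are illustrative rather than prescribed.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Annual interval, as one of the two options mentioned above.
MAX_VERIFICATION_AGE = timedelta(days=365)


@dataclass
class CreatorState:
    """Hypothetical snapshot of a creator account at login or upload time."""
    last_verified: date
    new_device: bool = False
    location_changed: bool = False
    content_type_changed: bool = False


def needs_reverification(state, today):
    """True if the verification has expired or any risk signal is present."""
    expired = today - state.last_verified >= MAX_VERIFICATION_AGE
    risk_signal = (
        state.new_device
        or state.location_changed
        or state.content_type_changed
    )
    return expired or risk_signal
```

A production system would probably weight signals instead of treating each as decisive, since prompting on every new device would annoy legitimate creators.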

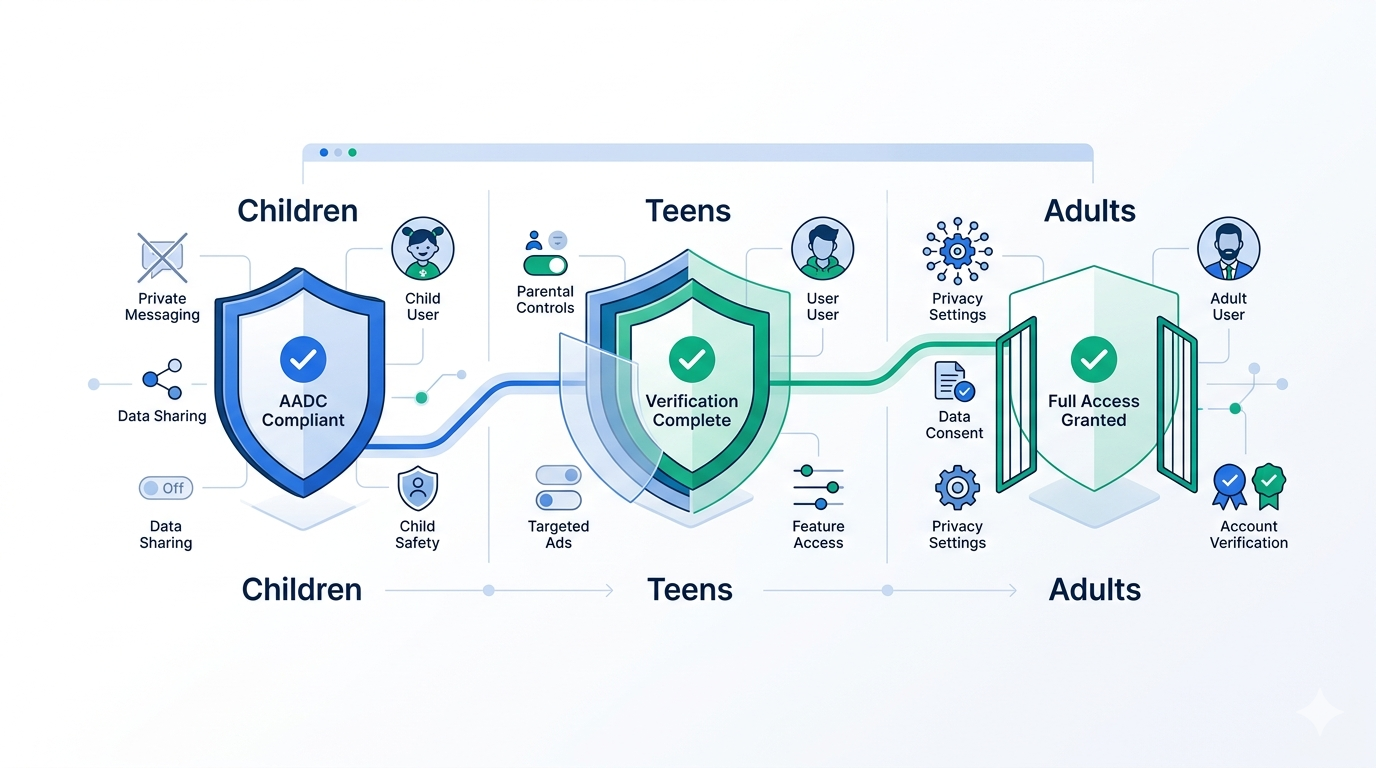

For Subscriber Verification

Age threshold classification — Not every platform needs full identity verification for subscribers. In many cases, what’s required is a binary age check: is this person over 18 (or 13, or 21, depending on the content and jurisdiction)? The verification should return a yes/no answer without collecting or storing unnecessary personal data.
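A binary age check can be sketched as a function whose return value carries only the threshold and the answer. The date of birth goes in, but it is not part of what comes out or what would be stored. The names here are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AgeCheckResult:
    """The only data kept after the check: which threshold, and pass or fail."""
    threshold: int
    passed: bool


def check_age(date_of_birth, threshold, today):
    """Compare age against the threshold and return a yes/no result only."""
    had_birthday = (today.month, today.day) >= (
        date_of_birth.month,
        date_of_birth.day,
    )
    age = today.year - date_of_birth.year - (0 if had_birthday else 1)
    # The date of birth is deliberately not copied into the result.
    return AgeCheckResult(threshold=threshold, passed=age >= threshold)
```

Because the threshold is a parameter, the same function serves 13, 18, or 21 depending on the content and jurisdiction.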

Token-based returning users — Once a subscriber’s age is verified, they shouldn’t need to re-verify every session. Issuing a cryptographic token that confirms age verification status — without containing the underlying personal data — provides a smooth user experience while maintaining compliance. This is the “verify once, prove everywhere” model.
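Here is a minimal sketch of such a token using an HMAC signature from Python's standard library. The claim names (`over`, `exp`) and the token layout are assumptions made for illustration; a production system would more likely use an established signed-token format.

```python
import base64
import hashlib
import hmac
import json


def _b64(data):
    return base64.urlsafe_b64encode(data).decode("ascii")


def issue_token(secret, threshold, expires_at):
    """Sign a claim that the holder is over `threshold`. No personal data inside."""
    claims = json.dumps({"over": threshold, "exp": expires_at}, sort_keys=True).encode()
    signature = hmac.new(secret, claims, hashlib.sha256).digest()
    return _b64(claims) + "." + _b64(signature)


def verify_token(secret, token, threshold, now):
    """True if the signature is valid, the token is unexpired, and it covers `threshold`."""
    try:
        claims_part, signature_part = token.split(".")
        claims = base64.urlsafe_b64decode(claims_part)
        signature = base64.urlsafe_b64decode(signature_part)
    except ValueError:
        return False  # malformed token
    expected = hmac.new(secret, claims, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return False  # tampered with, or signed with a different secret
    payload = json.loads(claims)
    return payload["over"] >= threshold and payload["exp"] > now
```

Two limits of this sketch are worth stating. HMAC uses a shared secret, so only the issuer can verify the token; proving age across platforms would need an asymmetric signature instead. And the token is a bearer credential with no link to a session or device, so anyone holding it can present it.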

Privacy-preserving architecture — Subscribers are rightly concerned about uploading government IDs to access content. The verification flow should minimize data collection, process biometric data on-device where possible, and delete source materials (ID images, selfies) promptly after verification. Zero-knowledge approaches — proving “this user is over 18” without revealing their date of birth, name, or ID number — are the gold standard.

The Conversion Question

The most common objection from platform operators: “Age verification will kill our conversion rates.”

The data doesn’t support this — when verification is implemented well. OnlyFans processes millions of age verifications and has grown revenue year over year. Discord rolled out mandatory age verification globally and maintained its user base. The platforms that see conversion drops are those with bad verification flows: slow processing times, unclear instructions, frequent false rejections, and intrusive data collection.

What actually kills conversion:

- Verification that takes more than 60 seconds — Users abandon flows that feel like applying for a mortgage. Sub-3-second verification is achievable with modern document scanning and liveness detection.

- Requiring information that feels unnecessary — Asking for a Social Security Number to access a cooking tutorial is going to raise red flags. Match the verification intensity to the content risk.

- No fallback for edge cases — Not everyone has a driver’s license. Not every ID document photographs well. Platforms need multiple verification pathways and clear support escalation for failed attempts.

- Opaque data practices — Users who don’t understand what happens to their ID image after verification will hesitate. Transparency about data handling, retention periods, and deletion practices directly impacts willingness to verify.
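The fallback point in the list above can be sketched as an ordered chain of verification pathways. The sketch assumes each pathway returns `True` (verified), `False` (definitively rejected), or `None` (could not reach a conclusion, for example an unreadable photo); that three-way convention and the pathway names in the usage note are illustrative.

```python
def verify_with_fallbacks(pathways, applicant):
    """Try pathways in order; escalate to manual review if none is conclusive.

    `pathways` is a list of (name, check) pairs, where check(applicant)
    returns True, False, or None as described above.
    """
    attempted = []
    for name, check in pathways:
        attempted.append(name)
        outcome = check(applicant)
        if outcome is True:
            return {"status": "verified", "via": name, "attempted": attempted}
        if outcome is False:
            # A definitive rejection is final; do not shop for a weaker check.
            return {"status": "rejected", "via": name, "attempted": attempted}
        # None means inconclusive, so move on to the next pathway.
    return {"status": "manual_review", "via": None, "attempted": attempted}
```

A caller might pass something like `[("drivers_license", check_license), ("passport", check_passport)]`, where the check functions are whatever the platform or its vendor provides. Only inconclusive results fall through to the next pathway, so a user whose ID shows they are underage cannot simply retry with a different method.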

Where Xident Fits

Xident provides the verification infrastructure that creator platforms need without building it from scratch:

For creator verification: Document OCR, NFC chip reading for passports and national IDs, server-side liveness detection, and face matching — the full stack needed for § 2257 compliance, DSA requirements, and platform trust and safety.

For subscriber age checks: Age threshold classification (12+, 15+, 18+, 21+, 25+) that returns a simple yes/no without over-collecting personal data. Client-side liveness detection for lightweight checks, server-side for high-risk scenarios.

For returning users: Token-based verification that lets subscribers prove their age status across sessions — and eventually across platforms — without re-verifying every time.

For privacy: On-device processing where possible, prompt deletion of source materials, and architecture designed around data minimization. No surveillance infrastructure. No persistent biometric databases.

For developers: A single API integration that handles the regulatory complexity. You call the endpoint, specify the age threshold and jurisdiction, and get back a verification result. Xident handles the document validation, liveness detection, face matching, and compliance logic. Your engineering team ships the integration in days, not quarters.

The creator economy is too large and too visible to avoid age verification compliance. The platforms that build robust, privacy-respecting verification into their user experience — rather than bolting on a checkbox and hoping for the best — will be the ones that survive the regulatory wave. And their creators will thank them for it.

Building a creator platform that needs age verification? Start with Xident’s API — integrate document verification, liveness detection, and age classification in under 5 minutes. Or talk to our team about enterprise deployment for platforms with custom compliance requirements.