Age-Appropriate Design Codes are no longer a UK-only concern. Five US states — California, Maryland, Nebraska, Vermont, and South Carolina — have enacted AADC-style laws, and at least a dozen more have active bills. South Carolina’s law took operational effect on March 1, 2026, with mandatory annual third-party audits starting July 1.

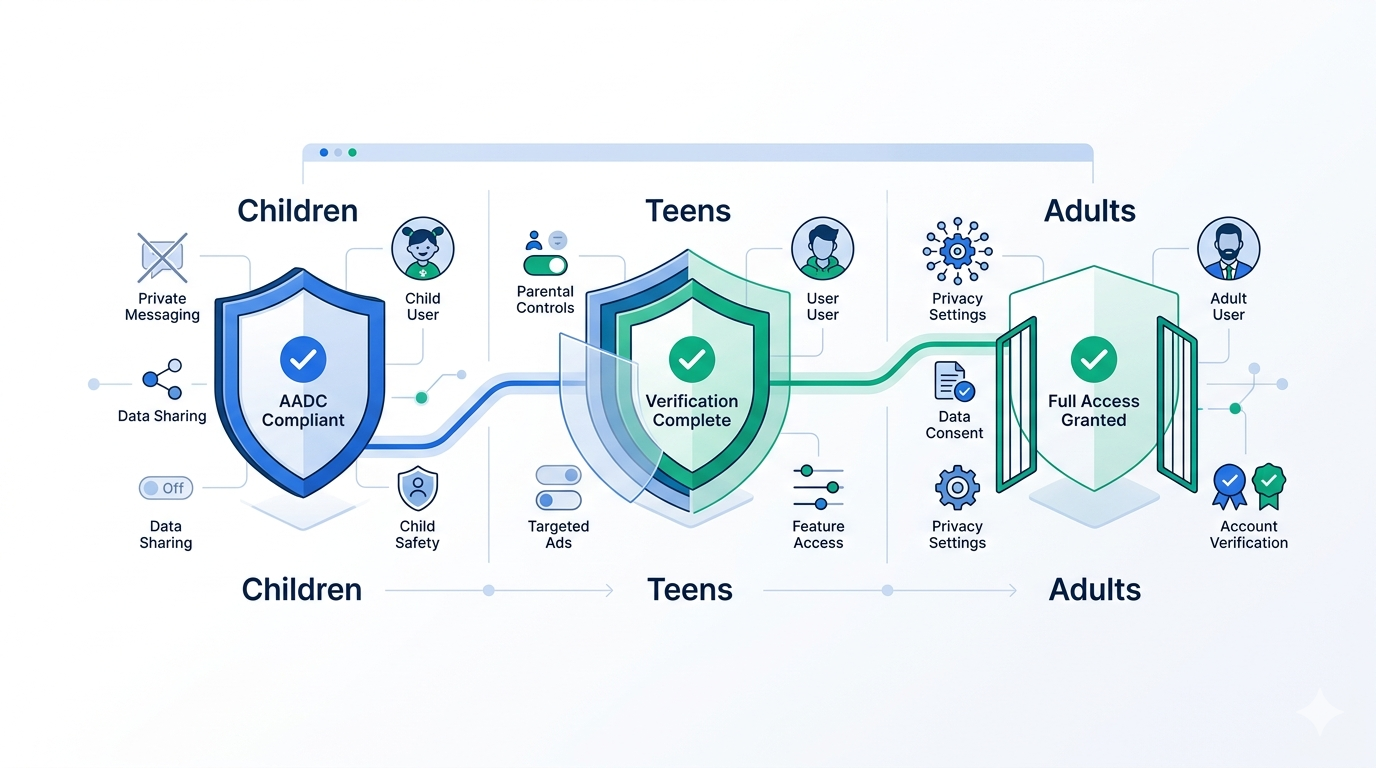

For platform operators, AADC laws represent a fundamentally different compliance challenge from the age verification mandates you may already be handling. Standard age gates answer a binary question: is this user old enough to access the platform? AADC laws go further. They require you to know a user’s age bracket and then design the product experience around it. That means age verification isn’t just an access checkpoint — it becomes a persistent signal that shapes feature exposure, data collection, and UI behavior across the entire product surface.

This post covers what AADC laws actually require, how they change your architecture, what the enforcement timeline looks like, and how to build a compliance layer that doesn’t require rebuilding your product from scratch.

What Age-Appropriate Design Codes Actually Require

The UK’s Children’s Code, enforced by the ICO since September 2021, was the first AADC. California’s Age-Appropriate Design Code Act (AB 2273) followed in 2022 and survived a facial legal challenge in 2024 when the Ninth Circuit reversed a preliminary injunction. The pattern has since spread to Maryland, Nebraska, Vermont, and South Carolina.

While each state’s law has local variations, the core requirements converge on five obligations that directly affect how platforms handle users likely to be minors.

1. Data Protection Impact Assessments (DPIAs)

Before launching any feature, product, or service that children are “reasonably likely to access,” you must complete a DPIA. This isn’t a checkbox exercise. The assessments must evaluate whether the feature could harm children through data collection, algorithmic amplification, or design patterns that exploit developmental vulnerabilities. South Carolina’s law explicitly requires these assessments to be made available to regulators on request.

The practical implication: your product development pipeline now needs a compliance gate before shipping features. If you serve a general audience — which most platforms do — the default assumption is that children will access the service, and the burden is on you to demonstrate otherwise.

2. High Privacy by Default

AADC laws mandate that privacy settings for minors default to the highest available level. That means no tracking that stays on until the user opts out, no public-by-default profiles, and no algorithmic recommendations based on behavioral profiling, unless the platform has affirmatively determined the user is an adult.

This creates a direct engineering dependency on age signals. Without reliable age determination, you face a choice: apply maximum privacy restrictions to all users (which degrades the adult experience and likely hurts engagement metrics), or implement age assurance so you can differentiate.

3. Prohibition of Dark Patterns

All five US AADC laws prohibit the use of dark patterns — manipulative design tactics that nudge minors toward sharing more data, making purchases, or spending more time on-platform. The definitions are broad. Auto-play, infinite scroll, gamification mechanics, visible like counts, push notifications, and in-app purchase flows all fall under scrutiny.

This doesn’t mean you must remove these features entirely. It means you must disable or restrict them for users identified as minors, which again requires knowing who your minor users are.

4. Restrictions on Profiling and Geolocation

Platforms cannot profile minors for commercial purposes or collect precise geolocation data unless it’s strictly necessary for the core service. South Carolina’s law goes further, requiring that platforms not use minors’ personal information in ways that are “reasonably likely to cause substantial harm.”

5. Transparency Reports and Third-Party Audits

South Carolina’s AADC mandates annual third-party audits with public reporting. California requires DPIAs to be filed with the Attorney General. The trend is clear: self-attestation is insufficient. Regulators want verifiable, audited evidence of compliance.

Why AADC Changes Your Architecture (Not Just Your Policy)

Most age verification implementations follow a gate model: check age at registration or first access, then treat the user uniformly after that. AADC compliance breaks this model in three ways.

Age Signals Must Be Persistent, Not Point-in-Time

A one-time age check at sign-up isn’t sufficient. AADC compliance requires the platform to continuously apply age-appropriate defaults. If a user ages from 12 to 13 to 16, the applicable restrictions change. If a minor’s account was created with parental consent, the consent scope must be re-evaluated as the child matures. Your user model needs a durable age attribute — not just a boolean is_adult flag, but an age bracket that can inform feature-level decisions.
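As a minimal sketch of what a durable age attribute could look like, the Python below derives the bracket from a stored signal every time it is read, so the bracket moves forward as the user ages. The names `AgeBracket` and `AgeSignal` are hypothetical, and retaining a verified birth date is an assumption made for illustration. If data minimization rules out storing it, you would need a different stored signal plus periodic re-verification.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class AgeBracket(Enum):
    UNDER_13 = "under-13"
    AGE_13_15 = "13-15"
    AGE_16_17 = "16-17"
    ADULT = "18+"


def bracket_for_age(age: int) -> AgeBracket:
    """Map a whole-year age to one of the four example brackets."""
    if age < 13:
        return AgeBracket.UNDER_13
    if age < 16:
        return AgeBracket.AGE_13_15
    if age < 18:
        return AgeBracket.AGE_16_17
    return AgeBracket.ADULT


@dataclass(frozen=True)
class AgeSignal:
    # Assumption: the verified birth date is retained on the user record.
    birth_date: date
    verified_on: date

    def age_on(self, today: date) -> int:
        """Whole years elapsed between birth_date and today."""
        years = today.year - self.birth_date.year
        if (today.month, today.day) < (self.birth_date.month, self.birth_date.day):
            years -= 1  # birthday has not happened yet this year
        return years

    def bracket_on(self, today: date) -> AgeBracket:
        """Recompute the bracket at read time instead of caching it forever."""
        return bracket_for_age(self.age_on(today))
```

Because `bracket_on` takes the evaluation date as an argument, the same stored signal yields a different bracket after each relevant birthday without any migration or re-check at sign-up.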

Feature Flags Must Be Age-Aware

Every feature governed by AADC requirements — algorithmic feeds, push notifications, direct messaging, in-app purchases, location sharing, profile visibility — needs conditional logic tied to the user’s age bracket. This isn’t a single if/else at the edge. It’s a cross-cutting concern that touches your recommendation engine, notification service, payment flow, privacy controls, and content moderation pipeline.

The cleanest implementation pattern is an age-aware feature flag system: define age brackets (e.g., under-13, 13–15, 16–17, 18+), map each feature to its applicable restrictions per bracket, and evaluate flags at runtime based on the user’s verified age signal. This keeps compliance logic centralized rather than scattered across dozens of service boundaries.

Audit Trails Must Prove Compliance

With mandatory third-party audits, you need to demonstrate — not just claim — that you applied the correct restrictions to the correct users at the correct time. That means logging when age was determined, what method was used, what confidence level was achieved, what restrictions were applied, and whether any overrides occurred. Your age verification provider should supply structured, auditable verification events that you can store and produce on demand.
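One way to capture those fields is a small immutable record that serializes to JSON. This is a sketch only: the class name, field names, and the example method label are hypothetical and do not reflect any provider's actual event schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgeDeterminationEvent:
    user_id: str
    method: str                  # hypothetical label, e.g. "document_check"
    confidence: float            # 0.0 to 1.0
    age_bracket: str             # e.g. "13-15"
    restrictions_applied: tuple  # names of restrictions in force
    determined_at: str           # ISO 8601 timestamp in UTC
    override_reason: Optional[str] = None

    def to_json(self) -> str:
        """Serialize with sorted keys so stored records are stable."""
        return json.dumps(asdict(self), sort_keys=True)


def record_determination(user_id, method, confidence, age_bracket,
                         restrictions_applied, override_reason=None, now=None):
    """Build an event, rejecting confidence values outside [0, 1]."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    moment = now if now is not None else datetime.now(timezone.utc)
    return AgeDeterminationEvent(
        user_id=user_id,
        method=method,
        confidence=confidence,
        age_bracket=age_bracket,
        restrictions_applied=tuple(restrictions_applied),
        determined_at=moment.isoformat(),
        override_reason=override_reason,
    )
```

Keeping the override reason on the same record means an auditor can see, in one place, both the restriction that should have applied and why it was relaxed.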

The Enforcement Timeline

Here’s where things stand as of April 2026:

California (AB 2273): Survived legal challenge. The Ninth Circuit reversed the preliminary injunction in August 2024, and the case was remanded. Enforcement by the California Attorney General is active, with DPIAs required for covered businesses.

Maryland (HB 603): Enacted in 2024. Requires platforms to configure default privacy and safety settings to protect minors. Enforcement authority rests with the Attorney General.

Nebraska (LB 1092): Part of a broader online safety package. Operational requirements in effect.

Vermont (H.121): Enacted in 2024 with broad AADC-style provisions targeting platforms serving Vermont minors.

South Carolina (S.120): Signed into law with immediate effect. Operational requirements began March 1, 2026. Annual third-party audits must be completed and published by July 1 each year, starting 2026.

Federal activity: The KIDS Act, currently advancing through House committee, incorporates AADC-style requirements at the federal level. If enacted, it would establish a national floor, potentially preempting the state patchwork — but that outcome is uncertain, and platforms shouldn’t wait for it.

Building AADC Compliance Without Rebuilding Your Product

The practical question for most teams is: how do I retrofit AADC compliance into an existing platform without rewriting every service? Here’s the approach that minimizes blast radius while meeting the requirements.

Step 1: Implement Tiered Age Assurance

You need age signals with enough granularity to distinguish between under-13, 13–15, 16–17, and 18+ users. A binary adult/minor check won’t satisfy AADC’s graduated requirements.

Xident’s verification flows return age bracket classifications — not just a pass/fail — which map directly to AADC compliance tiers. A single API call returns whether a user falls into +12, +15, +18, +21, or +25 brackets, giving your feature flag system the signal it needs without over-collecting personal data.

Step 2: Centralize Age-Based Feature Controls

Build (or extend) a feature flag service that accepts age bracket as an input parameter. Map each AADC-regulated feature to its applicable restrictions per age tier. This gives you a single configuration surface for compliance policy rather than scattered conditionals across your codebase.

Example mapping:

| Feature | Under 13 | 13–15 | 16–17 | 18+ |
|---|---|---|---|---|
| Algorithmic feed | Off | Opt-in only | Opt-in only | Default on |
| Push notifications | Off | Limited | Standard | Standard |
| Direct messaging | Contacts only | Contacts only | Open with safety | Open |
| In-app purchases | Blocked | Parental consent | Allowed with limits | Allowed |
| Profile visibility | Private | Private | Private by default | User choice |
| Location sharing | Off | Off | Opt-in only | User choice |
| Visible like counts | Hidden | Hidden | User choice | User choice |
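The example mapping can be expressed as plain configuration with a single lookup function. The sketch below is illustrative: the feature keys, policy labels, and `policy_for` are hypothetical names, and falling back to the under-13 column when the bracket is unknown is an assumption about how you would want to fail, not something the mapping itself specifies.

```python
from typing import Optional

# One row per feature, one column per age bracket, mirroring the example mapping.
FEATURE_POLICY = {
    "algorithmic_feed":    {"under-13": "off", "13-15": "opt-in", "16-17": "opt-in", "18+": "default-on"},
    "push_notifications":  {"under-13": "off", "13-15": "limited", "16-17": "standard", "18+": "standard"},
    "direct_messaging":    {"under-13": "contacts-only", "13-15": "contacts-only", "16-17": "open-with-safety", "18+": "open"},
    "in_app_purchases":    {"under-13": "blocked", "13-15": "parental-consent", "16-17": "allowed-with-limits", "18+": "allowed"},
    "profile_visibility":  {"under-13": "private", "13-15": "private", "16-17": "private-by-default", "18+": "user-choice"},
    "location_sharing":    {"under-13": "off", "13-15": "off", "16-17": "opt-in", "18+": "user-choice"},
    "visible_like_counts": {"under-13": "hidden", "13-15": "hidden", "16-17": "user-choice", "18+": "user-choice"},
}

# Assumption: an unknown or unverified bracket gets the most restrictive column.
MOST_RESTRICTIVE_BRACKET = "under-13"


def policy_for(feature: str, bracket: Optional[str]) -> str:
    """Return the policy label for a feature, failing closed on unknown brackets."""
    row = FEATURE_POLICY[feature]  # KeyError here means the feature was never mapped
    return row.get(bracket, row[MOST_RESTRICTIVE_BRACKET])
```

A service would call `policy_for("in_app_purchases", bracket)` and branch on the returned label, so changing compliance policy becomes an edit to one table rather than to each service.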

Step 3: Generate Auditable Compliance Records

For each user session, log the age determination event (method, timestamp, confidence score), the feature restrictions applied, and any changes to those restrictions over time. Xident’s verification events include structured metadata — verification method, confidence level, and result timestamps — that map directly to audit requirements. Store these records in an append-only audit log with retention aligned to your applicable statute of limitations (typically 3–5 years for state AG enforcement).
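To illustrate what append-only can mean in practice, here is a small in-memory sketch that chains each entry to the previous one with a SHA-256 hash, so later edits are detectable. Hash chaining is one possible technique chosen for this example, not a stated requirement of any AADC law, and `AppendOnlyAuditLog` is a hypothetical name. A production system would back this with durable write-once storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AppendOnlyAuditLog:
    def __init__(self):
        self._entries = []

    def append(self, record: dict) -> str:
        """Store a record and link it to the hash of the previous entry."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        payload = json.dumps(record, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self._entries.append(
            {"prev_hash": prev_hash, "payload": payload, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any modified or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev_hash + entry["payload"]).encode("utf-8")
            ).hexdigest()
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True
```

Running `verify()` before handing records to an auditor gives a quick integrity check on the stored history.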

Step 4: Complete DPIAs for Existing Features

Audit your current feature set against AADC requirements. Any feature involving data collection, algorithmic personalization, social interaction, or monetization needs a documented assessment of its impact on minors. Prioritize features with the highest risk exposure: recommendation algorithms, messaging systems, and purchase flows.

Step 5: Establish a Review Gate for New Features

Integrate AADC impact assessment into your product development workflow. Before any feature ships, it should pass through a compliance review that evaluates its behavior across all age brackets. This is most effective as a checklist in your existing PR or RFC process — not a separate bureaucratic layer.
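Part of that checklist can be automated. As a rough sketch, the check below fails when a shipped feature has no policy defined for one of the age brackets. It assumes a policy table keyed by feature and bracket, and the names `REQUIRED_BRACKETS` and `missing_coverage` are hypothetical.

```python
REQUIRED_BRACKETS = ("under-13", "13-15", "16-17", "18+")


def missing_coverage(policy: dict, shipped_features: list) -> dict:
    """Return {feature: [brackets with no policy]} for every incomplete feature."""
    gaps = {}
    for feature in shipped_features:
        row = policy.get(feature, {})  # an unmapped feature has no coverage at all
        missing = [b for b in REQUIRED_BRACKETS if b not in row]
        if missing:
            gaps[feature] = missing
    return gaps
```

Wired into CI, a non-empty result would block the merge until someone decides how the new feature behaves for each bracket. It does not replace the human impact assessment; it only catches features that skipped it.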

Common Mistakes to Avoid

Treating AADC as a legal problem, not an engineering problem. The requirements are structural. They affect data models, feature flags, privacy defaults, and audit infrastructure. Legal can define the policy; engineering has to implement it.

Relying on self-declared age. Every AADC law requires “reasonable” age determination. A date-of-birth field that a 12-year-old can trivially lie on doesn’t meet the standard. Regulators have explicitly called out self-declaration as insufficient.

Applying minor-level restrictions to everyone. Some platforms attempt compliance by restricting all users to the most restrictive tier. This technically satisfies the law but destroys the product experience for adults. It’s also not sustainable: advertisers and engagement metrics will force a rollback, which creates compliance risk.

Ignoring the audit requirement. South Carolina’s third-party audit is mandatory, and other states are likely to follow. If you can’t produce evidence that age-appropriate restrictions were applied correctly, the audit will flag you regardless of your actual compliance posture.

The Bottom Line

Age-Appropriate Design Codes are transforming age verification from a single access gate into a persistent product design constraint. The platforms that will handle this transition most efficiently are those that treat age signals as a first-class attribute in their user model — not an afterthought bolted onto the registration flow.

If your current age verification solution only returns a binary yes/no, you’re already behind. AADC compliance requires graduated age brackets, persistent signals, and auditable verification events. That’s exactly what Xident was built to provide.

The compliance window is closing. South Carolina’s audit deadline is July 1. California’s AG is actively enforcing. And the federal KIDS Act could establish a national standard within 12 months. The time to build the infrastructure is now — before the first enforcement action lands on your platform.

Need to implement AADC-compliant age verification? Get started with Xident — tiered age brackets, privacy-preserving architecture, and audit-ready verification events out of the box.