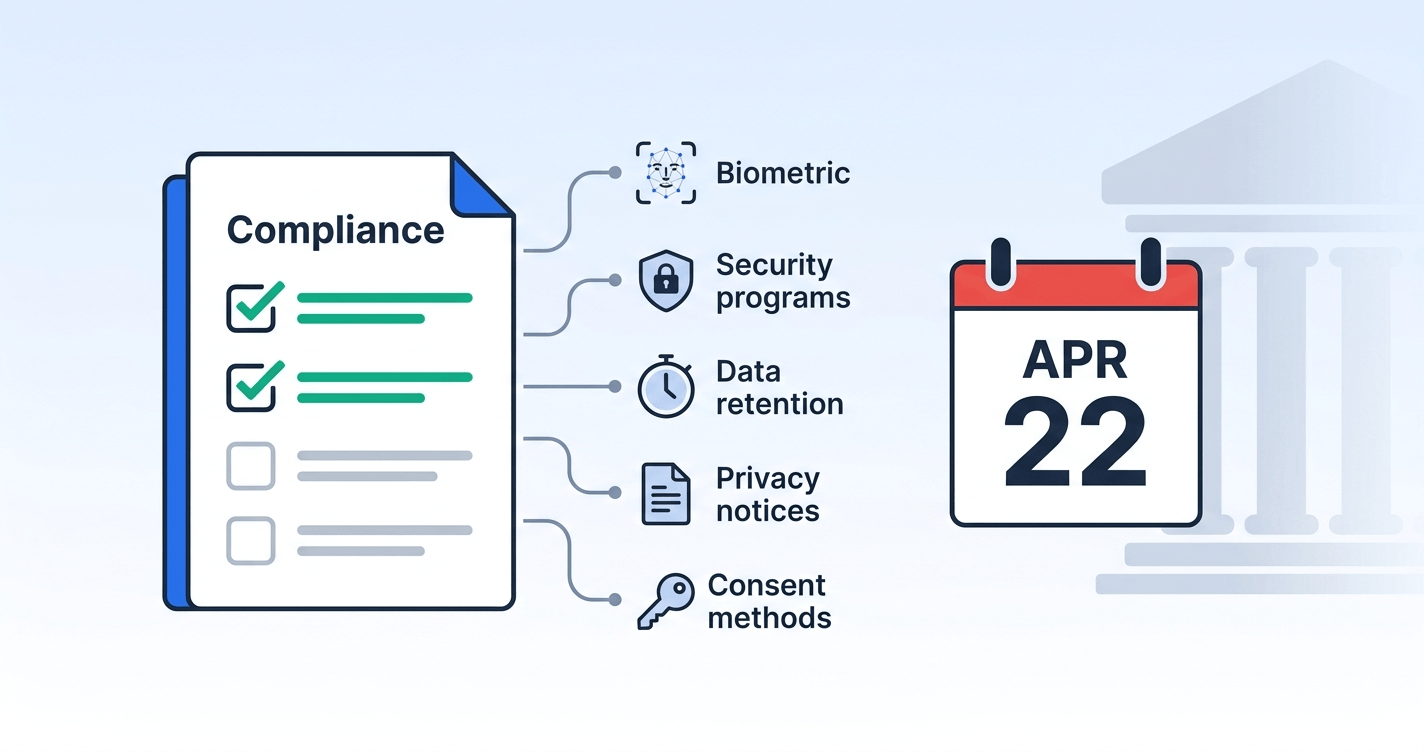

The compliance deadline for the FTC’s amended COPPA Rule is April 22, 2026 — nine days from today. If your platform collects data from users who might be under 13, or if you operate a service directed at children, the changes taking effect are not cosmetic. They expand what counts as personal information, introduce mandatory written security programs, impose real data retention limits, and add new consent verification mechanisms.

This isn’t the February 2026 policy statement about age verification safe harbors (we covered that separately). These are structural amendments to the COPPA Rule itself — the first major update since 2013 — and they carry enforcement consequences.

Here’s what changed, what it means for your architecture, and what you need to do before the 22nd.

What the Amended Rule Actually Changes

The FTC announced the final amendments in January 2025 and published them in the Federal Register on April 22, 2025. They took effect on June 23, 2025, and the compliance deadline falls one year after publication, on April 22, 2026. The changes fall into five categories that matter for platform engineering and compliance teams.

1. Expanded Definition of Personal Information

This is the change with the broadest blast radius. The amended rule adds biometric identifiers and government-issued identifiers to COPPA’s definition of “personal information.” Precise geolocation and audio files have been part of the definition since the 2013 update, but they belong in the same audit:

- Biometric identifiers (new): face templates, fingerprints, retina scans, voiceprints, and any biometric data that can identify a specific individual. If your age verification flow captures a selfie and extracts facial geometry, even temporarily, that’s now explicitly personal information under COPPA.

- Government-issued identifiers (new): Social Security numbers were already covered; the amendment extends the definition to any government-issued identifier, such as state ID or passport numbers.

- Specific geolocation data (covered since 2013): Location data precise enough to identify a street name and city or town. Coarse geolocation (city-level from IP) may still be exempt, but if your SDK collects GPS coordinates from a child’s device, you need consent.

- Audio recordings containing a child’s voice (covered since 2013): Not just voice recognition data. The recording itself is personal information if it contains a child’s voice.

What this means for your stack: Audit every data collection point. If your verification pipeline touches biometric data, geolocation, or audio from a user who might be under 13, every one of those data points now triggers COPPA’s full consent and notice requirements. This is particularly relevant for platforms using face-based age estimation — even privacy-preserving approaches need to account for the moment between capture and on-device processing.
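One way to start that audit is a scan over a data inventory. The sketch below assumes a hypothetical, hand-maintained list of collected fields, each tagged with an internal data type; the tags, field names, and category labels are illustrative and are not taken from the rule text.

```python
from dataclasses import dataclass

# Hypothetical mapping from internal data-type tags to the personal
# information categories discussed above. Adapt it to your own taxonomy.
COPPA_CATEGORIES = {
    "face_template": "biometric identifier",
    "voiceprint": "biometric identifier",
    "passport_number": "government-issued identifier",
    "gps_coordinates": "precise geolocation",
    "voice_recording": "audio containing a child's voice",
}


@dataclass(frozen=True)
class CollectedField:
    system: str                 # service or SDK that collects the field
    name: str                   # field name inside that system
    data_type: str              # internal data-type tag
    may_include_under_13: bool  # could this come from a child?


def flag_coppa_fields(inventory):
    """Return (field, category) pairs that need consent and notice review."""
    flagged = []
    for item in inventory:
        category = COPPA_CATEGORIES.get(item.data_type)
        if category is not None and item.may_include_under_13:
            flagged.append((item, category))
    return flagged


inventory = [
    CollectedField("age-check", "selfie_embedding", "face_template", True),
    CollectedField("maps-sdk", "last_position", "gps_coordinates", True),
    CollectedField("billing", "invoice_total", "currency_amount", False),
]

for item, category in flag_coppa_fields(inventory):
    print(f"{item.system}.{item.name}: {category}")
```

The output is only as good as the inventory, so the real work is making sure every SDK and pipeline stage appears in it.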

2. Mandatory Written Information Security Program

For the first time, COPPA requires operators to maintain a formal, documented information security program for children’s data. This is a rule requirement, not a suggestion, and it has specific elements:

- Designated coordinator: Someone must be explicitly responsible for the program. This can’t be an unnamed team; the rule expects an identified individual or role.

- Risk identification: You must conduct and document risk assessments specific to children’s personal information — not just general security assessments.

- Protective safeguards: Technical and organizational controls must be documented, implemented, and appropriate to the sensitivity of the data.

- Regular evaluation: Safeguard effectiveness must be evaluated on an ongoing basis, not just at implementation.

- Annual updates: The program must be updated at least annually to reflect emerging threats and risk-management practices.

What this means for your stack: If you’ve been relying on your general SOC 2 or ISO 27001 program to cover children’s data, that may not be sufficient. The COPPA security program must specifically address children’s personal information as a distinct data category. You need a documented program that names a responsible party, identifies risks specific to children’s data, and shows evidence of annual review.
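The program itself is a written document, but its required elements can be tracked as structured data so that gaps surface automatically. A minimal sketch, assuming a hypothetical `ChildDataSecurityProgram` record and treating “annual” as 365 days; neither comes from the rule text.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class ChildDataSecurityProgram:
    coordinator: str = ""                                  # named individual or role
    risks: List[str] = field(default_factory=list)         # risks specific to children's data
    safeguards: List[str] = field(default_factory=list)    # documented controls
    last_reviewed: Optional[date] = None


def program_gaps(program, today):
    """List the elements described above that are missing or stale."""
    gaps = []
    if not program.coordinator.strip():
        gaps.append("no designated coordinator")
    if not program.risks:
        gaps.append("no risk assessment specific to children's data")
    if not program.safeguards:
        gaps.append("no documented safeguards")
    if program.last_reviewed is None:
        gaps.append("program has never been reviewed")
    elif today - program.last_reviewed > timedelta(days=365):
        gaps.append("annual review overdue")
    return gaps


program = ChildDataSecurityProgram(
    coordinator="Director of Privacy Engineering",
    risks=["voice recordings stored unencrypted in support tooling"],
    safeguards=["encrypt recordings at rest", "restrict access to support leads"],
    last_reviewed=date(2025, 1, 15),
)
print(program_gaps(program, today=date(2026, 4, 13)))
```

A check like this does not replace the written program; it only flags when the record behind it has gone stale.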

3. Written Data Retention and Deletion Policies

The amended rule requires operators to adopt written data retention policies that specify:

- What personal information is collected from children

- The business purpose for each category of data

- A defined timeframe for deletion

The critical language: personal information from a child may not be retained indefinitely. The FTC declined to set a specific retention period, but the expectation is clear — you need a defensible retention schedule with actual dates, not vague language about keeping data “as long as necessary.”

What this means for your stack: Map every data store that could contain children’s personal information. For each, define a retention period and implement automated deletion. This includes backups, analytics pipelines, log aggregators, and any third-party service that receives children’s data. If your age verification vendor retains verification results or session data, their retention schedule becomes part of your compliance surface.
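A retention schedule is easiest to enforce when it exists as data that a deletion job can read. The sketch below assumes hypothetical category names and retention periods (the FTC did not set any) and fails loudly for any category without a defined period, so nothing is kept indefinitely by default.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical schedule: the periods are placeholders, not regulatory values.
RETENTION_SCHEDULE = {
    "verification_result": timedelta(days=30),
    "gps_trace": timedelta(days=7),
    "voice_recording": timedelta(days=90),
}


def is_expired(category, collected_at, now):
    """True once a record has reached its retention limit."""
    if category not in RETENTION_SCHEDULE:
        # An unmapped category is a policy gap, not a reason to keep the data.
        raise ValueError(f"no retention period defined for {category!r}")
    return now - collected_at >= RETENTION_SCHEDULE[category]


def ids_due_for_deletion(records, now):
    """Return the ids of records whose retention period has elapsed."""
    return [
        r["id"] for r in records
        if is_expired(r["category"], r["collected_at"], now)
    ]


now = datetime(2026, 4, 22, tzinfo=timezone.utc)
records = [
    {"id": "a1", "category": "gps_trace", "collected_at": now - timedelta(days=10)},
    {"id": "b2", "category": "voice_recording", "collected_at": now - timedelta(days=10)},
]
print(ids_due_for_deletion(records, now))
```

The same schedule should drive deletion in backups, log aggregators, and vendor systems, which usually means one job per store rather than one job overall.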

4. Enhanced Privacy Notice Requirements

Operators must now disclose:

- The identities and specific categories of all third parties receiving children’s personal information — not just generic descriptions like “analytics providers” or “service partners.”

- How audio files containing children’s voices are collected, used, and deleted.

- All sources of data collection, including third-party SDKs and embedded content.

What this means for your stack: Your privacy policy probably names categories of recipients. The amended rule wants names and categories. If you share children’s data with Firebase, Amplitude, Stripe, or any other specific service, those names need to appear in your COPPA notice. This creates an ongoing maintenance burden — every new vendor integration that touches children’s data requires a privacy notice update.
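To keep that maintenance burden manageable, the notice can be checked against a vendor registry in CI. This is a minimal sketch assuming a hypothetical registry format and a naive, case-insensitive substring match on vendor names; a production check would more likely compare against a structured notice source.

```python
def vendors_missing_from_notice(integrations, notice_text):
    """Return vendors that receive children's data but are not named in the notice."""
    text = notice_text.lower()
    return sorted(
        v["name"]
        for v in integrations
        if v["receives_child_data"] and v["name"].lower() not in text
    )


# Hypothetical registry; the flags are illustrative, not statements about
# how these services are actually used.
integrations = [
    {"name": "Firebase", "category": "app infrastructure", "receives_child_data": True},
    {"name": "Amplitude", "category": "product analytics", "receives_child_data": True},
    {"name": "Stripe", "category": "payments", "receives_child_data": False},
]

notice = "We share children's personal information with Firebase (app infrastructure)."

missing = vendors_missing_from_notice(integrations, notice)
print("Vendors to add to the COPPA notice:", missing)
```

Failing the build when the list is non-empty turns a forgotten notice update into a blocked deploy instead of a compliance gap.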

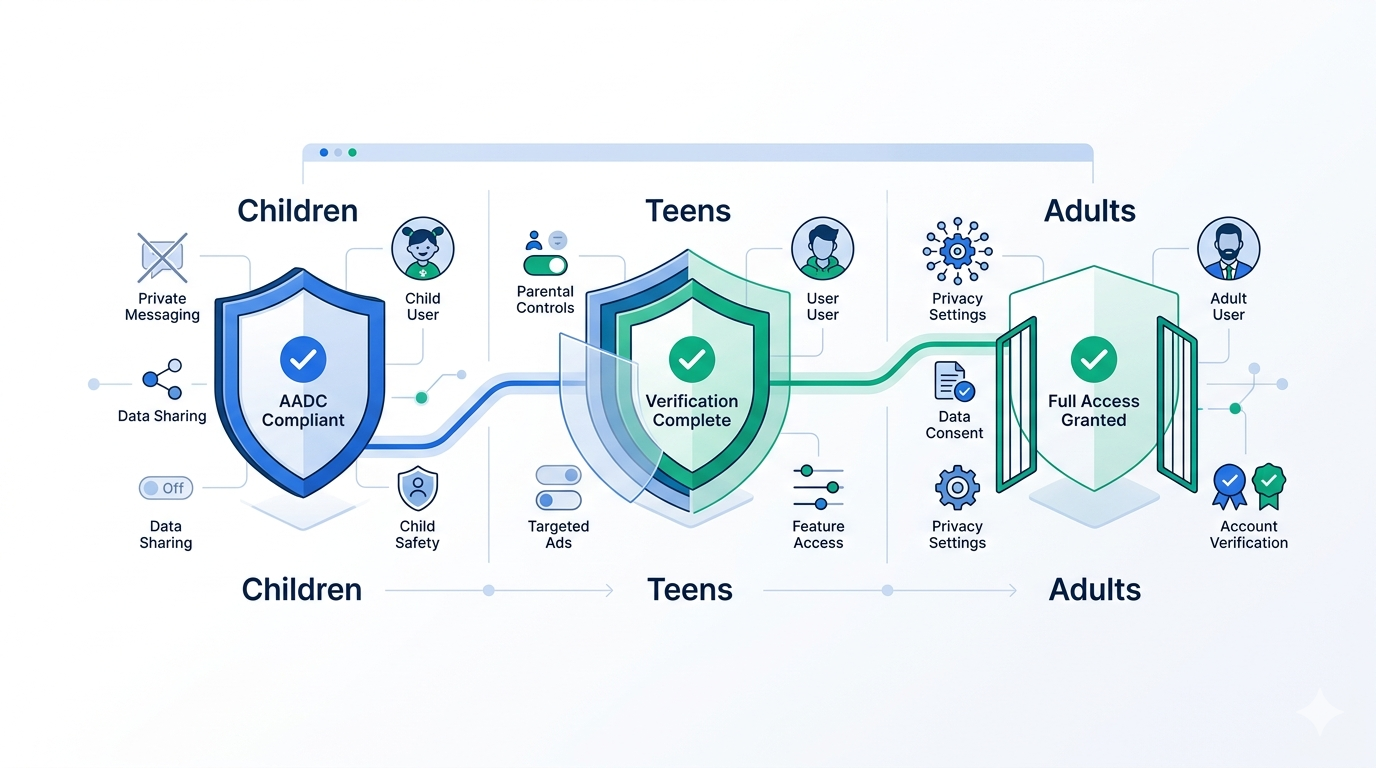

5. New Consent Verification Methods

The amended rule approves new methods for obtaining verifiable parental consent:

- Knowledge-based authentication: Multiple-choice questions drawn from data that a parent (but not the child) would know.

- Government ID matched against facial recognition: Parents can verify identity by submitting an ID and a live selfie for matching.

- Text message verification (“text plus”): SMS-based flows are now explicitly permitted, as a counterpart to “email plus” for lower-risk data collection scenarios.

These join existing methods (credit card transactions, signed consent forms, video verification). The FTC also retained the “email plus” method for lower-risk data collection scenarios.

What this means for your stack: If you’ve been limited to email-based consent flows, you now have more options — but each new method introduces its own data handling requirements. Government ID plus facial recognition for parental consent, ironically, creates new biometric data that itself falls under the expanded personal information definition. Make sure your consent verification pipeline doesn’t create a COPPA compliance problem while solving one.

The Ed-Tech Provision

The amendments include specific provisions for educational technology operators. Schools can consent on behalf of parents for educational purposes, but the amended rule tightens the conditions:

- Data collected under school consent can only be used for the authorized educational purpose.

- Operators must provide schools with access to and the ability to delete children’s data.

- Marketing or commercial use of data collected under school consent is prohibited.

If your platform serves both direct consumers and school/institutional customers, you likely need separate data handling pipelines — one operating under parental consent, one under school consent, with different retention and use restrictions.
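The split can be enforced at the point of use by tagging every record with its consent basis and checking the purpose before processing. A minimal sketch, assuming hypothetical purpose names; the set allowed under parental consent depends on what the parent actually agreed to.

```python
from enum import Enum


class ConsentBasis(Enum):
    PARENT = "parent"
    SCHOOL = "school"


# Hypothetical purpose lists. School consent covers only the authorized
# educational purpose, so marketing and analytics are deliberately absent.
ALLOWED_PURPOSES = {
    ConsentBasis.SCHOOL: frozenset({"instruction", "grading"}),
    ConsentBasis.PARENT: frozenset({"instruction", "grading", "product_analytics"}),
}


def may_use(basis, purpose):
    """True if data collected under this consent basis may serve the purpose."""
    return purpose in ALLOWED_PURPOSES[basis]


def require_permitted(record, purpose):
    """Raise before processing if the purpose is outside the consent basis."""
    if not may_use(record["consent_basis"], purpose):
        raise PermissionError(
            f"{purpose!r} is not permitted under {record['consent_basis'].value} consent"
        )


school_record = {"id": "s-101", "consent_basis": ConsentBasis.SCHOOL}
print(may_use(school_record["consent_basis"], "grading"))
print(may_use(school_record["consent_basis"], "product_analytics"))
```

Calling `require_permitted` at the entry point of each pipeline keeps school-consented data out of commercial uses even when both pipelines share storage.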

A Compliance Checklist for the Next Nine Days

If you’re reading this and haven’t started, here’s a prioritized list of what to address before April 22.

Immediate (do this week):

- Audit your personal information inventory against the expanded definition. Search for any collection of biometric data, government IDs, precise geolocation, or audio recordings from users who might be under 13.

- Update your COPPA privacy notice with specific third-party names and categories. List every vendor, SDK, and analytics service that receives children’s data.

- Draft your written data retention policy with explicit timeframes for each data category. Even if you plan to refine it, having a documented policy by April 22 is the minimum.

- Designate a COPPA security program coordinator by name or role, and document this assignment.

Short-term (before April 22):

- Document your information security program specific to children’s data. This can reference your existing security program but must explicitly address children’s personal information as a category with identified risks and controls.

- Review third-party agreements to ensure vendors handling children’s data have appropriate retention and deletion provisions.

- Implement or plan automated deletion for children’s personal information based on your defined retention periods.

Ongoing (post-deadline):

- Schedule annual security program reviews and document the review process.

- Build vendor update triggers so new integrations automatically prompt privacy notice updates.

- Evaluate new consent methods (knowledge-based auth, SMS verification) if your current consent flow has high abandonment rates.

How Xident Helps

Xident’s age verification architecture is designed to minimize COPPA exposure, not increase it.

On-device processing: Our age estimation runs client-side using WebAssembly and ONNX Runtime. No biometric data — no facial geometry, no selfie images — leaves the user’s device or reaches our servers. This means the biometric data generated during age estimation doesn’t enter your data pipeline at all, significantly reducing your expanded personal information footprint under the amended rule.

No data retention by design: Xident returns an age threshold result (over/under a specified age), not raw biometric data. There’s nothing to retain and nothing to delete, which simplifies your data retention policy for the age verification component of your stack.

Token-based returning users: Once a user is verified, Xident issues a reusable cryptographic token. Returning users prove their age status without repeating the verification process, eliminating repeated biometric data collection.

Purpose-limited data flow: Because Xident only returns a pass/fail age threshold determination, there’s no personal information output that could be repurposed for marketing, analytics, or profiling — satisfying the purpose limitation requirements inherent in both the amended COPPA rule and the February 2026 safe harbor policy.

If your platform needs to determine whether users are under 13 — either to apply COPPA protections or to gate access to age-restricted features — Xident provides the answer without creating the compliance burden that traditional ID-upload or server-side biometric verification methods now carry under the amended rule.

The Bottom Line

The April 22 deadline isn’t optional, and the FTC has been signaling aggressive enforcement intent throughout 2026. The February policy statement encouraging age verification, combined with these structural rule amendments, creates a clear regulatory expectation: platforms should be verifying ages, and when they do, they need to handle the resulting data with documented, auditable rigor.

The good news is that the amended rule rewards privacy-preserving architectures. If your age verification approach minimizes data collection — processing biometrics on-device, returning only threshold results, avoiding persistent storage — you’ve already addressed the hardest compliance requirements before they become your problem.

The nine-day window is tight. Start with the audit, get the documentation in place, and build the automated systems over the following months. The FTC will likely look more favorably at operators who demonstrated good-faith compliance efforts by the deadline than at those who ignored it entirely.

Need to implement age verification that’s compliant by design? Get started with Xident in under five minutes, or contact our team for enterprise integration support.