Today — April 22, 2026 — marks the full compliance deadline for the FTC’s amended COPPA Rule. If your EdTech platform collects data from users who might be under 13, the grace period is over. The penalties for non-compliance are real, and the FTC has already signaled that education technology is a priority enforcement sector.

This isn’t a theoretical future concern. It’s the regulatory reality right now.

What Changed in the Amended COPPA Rule

The FTC published its amended Children’s Online Privacy Protection Rule with an effective date of June 23, 2025, and gave companies until April 22, 2026, to reach full compliance. The changes are substantial — not incremental updates to existing requirements, but structural shifts in how platforms must handle children’s data.

Expanded Definition of Personal Information

The amended rule expands “personal information” to explicitly include biometric identifiers that can be used for the automated or semi-automated recognition of an individual: facial recognition templates, voiceprints, fingerprints, and similar data. For EdTech platforms using facial recognition for proctoring, attention monitoring, or identity confirmation, this is a direct hit. Any biometric collection from a user under 13 now falls squarely under COPPA’s consent requirements.

Stricter Consent Verification

Self-declaration, meaning asking users to enter a birthdate or check a box confirming they’re over 13, has never satisfied COPPA’s “verifiable parental consent” standard, and the FTC has made clear that platforms must use methods that provide a higher degree of confidence that the person consenting is actually the child’s parent. The amended rule adds knowledge-based authentication and matching a photo of the parent against a verified government-issued ID to the accepted methods, alongside long-standing options such as video conferencing with trained personnel and checking a government ID against a database.

Data Retention Limits

Platforms can no longer retain children’s data indefinitely. The amended rule requires that personal information be kept “for only as long as is reasonably necessary to fulfill the specific purpose(s) for which the information was collected.” For EdTech companies that built data lakes of student interaction data for analytics, recommendation engines, or AI model training, this creates an immediate obligation to audit and purge.
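A minimal sketch of an audit-and-purge pass, assuming every stored record is tagged with the purpose it was collected for and that each purpose has a retention window defined in the platform’s own written retention policy. The purpose names and day counts are hypothetical.

```typescript
// Hypothetical purposes and retention windows; real values come from your
// own written retention policy, not from the COPPA Rule itself.
type Purpose = "coursework" | "progress_reporting" | "support_ticket";

const RETENTION_DAYS: Record<Purpose, number> = {
  coursework: 365,
  progress_reporting: 180,
  support_ticket: 30,
};

interface ChildRecord {
  id: string;
  purpose: Purpose;
  collectedAt: Date;
}

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Splits records into those still inside their purpose's retention window
// and those due for deletion as of `now`.
function partitionForRetention(
  records: ChildRecord[],
  now: Date,
): { keep: ChildRecord[]; purge: ChildRecord[] } {
  const keep: ChildRecord[] = [];
  const purge: ChildRecord[] = [];
  for (const r of records) {
    const ageDays = (now.getTime() - r.collectedAt.getTime()) / MS_PER_DAY;
    (ageDays > RETENTION_DAYS[r.purpose] ? purge : keep).push(r);
  }
  return { keep, purge };
}
```

Running this on a schedule, and logging what was purged, gives the audit trail the purge obligation implies.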

Third-Party Disclosure Restrictions

Sharing children’s data with third parties for analytics, advertising, or data enrichment now requires separate verifiable parental consent, distinct from the parent’s consent to the primary data collection, unless the disclosure is integral to the nature of the service. The days of bundling third-party data sharing into a general consent flow are over.
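Keeping collection consent and disclosure consent as separate fields makes the separate-consent requirement enforceable in code. In this sketch the consent shape and category names are hypothetical, and it does not model any exceptions the rule allows.

```typescript
// Hypothetical consent record: collection consent and third-party
// disclosure consent are stored as separate grants.
type DisclosureCategory = "analytics" | "advertising" | "enrichment";

interface ParentalConsent {
  childId: string;
  collection: boolean;
  disclosure: ReadonlySet<DisclosureCategory>;
}

// True only if the parent separately consented to this category of
// third-party disclosure; collection consent alone is never sufficient.
function mayDisclose(consent: ParentalConsent, category: DisclosureCategory): boolean {
  return consent.collection && consent.disclosure.has(category);
}

// Drops outbound events for any child whose parent has not consented to
// this specific disclosure category (or who has no consent record at all).
function filterOutbound<T extends { childId: string }>(
  events: T[],
  consents: Map<string, ParentalConsent>,
  category: DisclosureCategory,
): T[] {
  return events.filter((e) => {
    const c = consents.get(e.childId);
    return c !== undefined && mayDisclose(c, category);
  });
}
```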

Why EdTech Is the FTC’s Priority Target

The FTC isn’t treating education technology as just another sector that happens to serve children. It’s treating EdTech as a sector with uniquely high exposure to COPPA violations, for three reasons.

Scale of minor exposure. K-12 platforms routinely serve millions of users who are definitionally under 13. Unlike social media platforms where minors are a subset of the user base, many EdTech products are designed exclusively for children. The entire user population is in scope.

School consent complexity. COPPA allows schools to act as agents for parental consent — but only for educational purposes. When an EdTech vendor collects data that serves commercial purposes (analytics for product improvement, feature usage tracking, A/B testing), the school consent exception doesn’t apply. This distinction has been poorly understood and widely violated across the industry.

Biometric data collection. The shift to remote learning accelerated the adoption of biometric technologies in EdTech — facial recognition for proctoring, voice analysis for language learning, eye tracking for engagement measurement. These technologies are now explicitly covered by COPPA, and many platforms haven’t updated their consent mechanisms to reflect it.

In February 2026, the FTC issued guidance specifically addressing EdTech companies, warning that “the school authorization exception is not a blanket permission to collect whatever data a vendor wants.” The message is unambiguous: EdTech companies need robust age verification and consent mechanisms, and the FTC will enforce against those that don’t have them.

The FERPA–COPPA Intersection

EdTech platforms operating in US K-12 environments face a dual compliance challenge. FERPA (the Family Educational Rights and Privacy Act) governs education records held by schools and their authorized agents. COPPA governs the online collection of children’s personal information by commercial operators.

These aren’t redundant protections — they create overlapping but distinct obligations:

FERPA requires schools to maintain control over education records. When an EdTech vendor acts as a “school official” under FERPA, it can access student data without direct parental consent — but only for legitimate educational purposes, and only under the school’s direct control.

COPPA requires the commercial operator (the EdTech company) to obtain verifiable parental consent before collecting personal information from children under 13. The school can provide this consent on behalf of parents, but only for the specific educational purpose stated in the vendor agreement.

The practical implication: an EdTech platform cannot rely on a school’s FERPA authorization to satisfy COPPA requirements for data collection that exceeds the educational purpose. If your platform collects behavioral data for product analytics, serves personalized content recommendations, or uses student data to train machine learning models, those activities likely fall outside the school consent exception — and require direct parental consent with proper age verification.
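The distinction can be made explicit by mapping each data use to the consent basis it requires. The use names and the split between educational and commercial uses are assumptions for illustration; where the line falls for a real product is a question for the vendor agreement and counsel.

```typescript
// Hypothetical data uses; which ones count as educational must be settled
// with counsel and the school's vendor agreement.
type DataUse =
  | "deliver_lesson"
  | "grade_assignment"
  | "product_analytics"
  | "personalized_recommendations"
  | "model_training";

type ConsentBasis = "school_authorization" | "direct_parental_consent";

// Uses assumed to fall inside the school-authorized educational purpose.
const EDUCATIONAL_USES: ReadonlySet<DataUse> = new Set<DataUse>([
  "deliver_lesson",
  "grade_assignment",
]);

// School authorization covers educational uses only; every other use
// needs verifiable consent obtained directly from a parent.
function requiredBasis(use: DataUse): ConsentBasis {
  return EDUCATIONAL_USES.has(use) ? "school_authorization" : "direct_parental_consent";
}

function isPermitted(use: DataUse, held: ReadonlySet<ConsentBasis>): boolean {
  // Assumption: direct parental consent, once obtained, covers every use
  // disclosed to the parent, including the educational ones.
  return held.has(requiredBasis(use)) || held.has("direct_parental_consent");
}
```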

What Online Learning Platforms Need to Implement

Whether you’re building a K-12 learning management system, an online tutoring marketplace, a language learning app, or an AI-powered homework assistant, the requirements converge on the same set of capabilities.

Age Gate at Registration

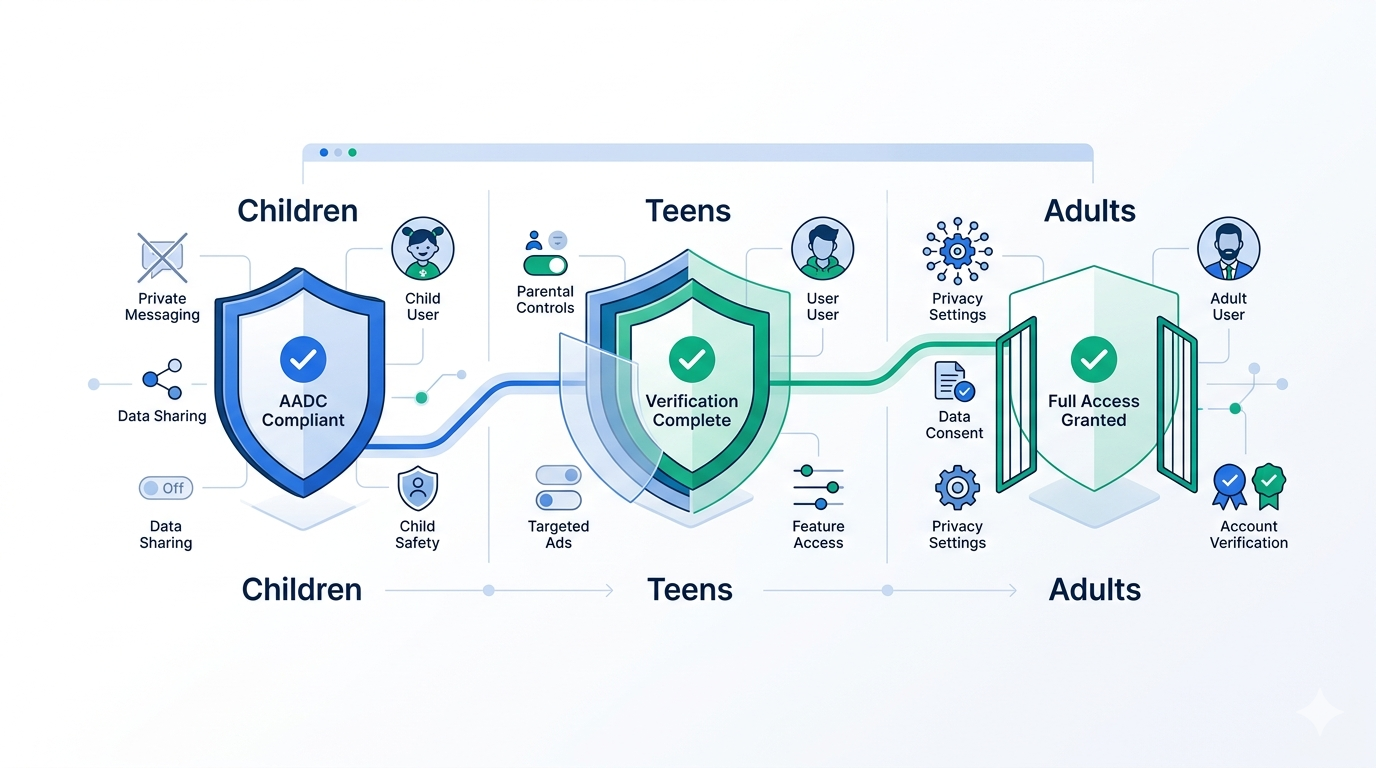

Every new user must be age-verified before any data collection occurs. This doesn’t mean asking for a birthdate — it means using a verification method that provides reasonable confidence about the user’s age. For platforms serving mixed-age audiences, this is the first decision point: is this user a child, an adult student, or a parent/teacher?
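A minimal sketch of that first decision point, assuming a hypothetical age-check result that exposes only whether the user meets the 13+ and 18+ thresholds. The `Role` and `Route` names, and the rule that parent and teacher accounts must belong to adults, are illustrative platform policy rather than COPPA requirements.

```typescript
// Hypothetical result of an age check: the platform learns only which
// thresholds the user meets, never a birthdate.
interface AgeCheckResult {
  meets13: boolean;
  meets18: boolean;
}

type Role = "student" | "educator_or_parent";
type Route = "child_consent_required" | "teen_account" | "adult_account";

// Chooses the registration path before any profile data is collected.
function routeRegistration(role: Role, age: AgeCheckResult): Route {
  if (role === "educator_or_parent") {
    // Assumed policy: parents and teachers must be adults.
    if (!age.meets18) throw new Error("educator/parent accounts require an adult");
    return "adult_account";
  }
  if (!age.meets13) return "child_consent_required";
  return age.meets18 ? "adult_account" : "teen_account";
}
```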

Parental Consent Flow

If the user is under 13 (or under the applicable age threshold in their jurisdiction, which is 16 in many EU countries under GDPR), the platform must obtain verifiable parental consent before proceeding. The consent method must be reasonably calculated to ensure that the consenting adult is actually the child’s parent or guardian, not just someone with access to the parent’s email.
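The consent step can be modeled as a small state machine in which a grant only counts once the consenting adult has passed an identity check. This is a sketch with hypothetical state and event names; which verification method moves a parent into the verified state is left abstract.

```typescript
// Hypothetical consent states for one child account.
type ConsentState =
  | { kind: "awaiting_parent" }
  | { kind: "parent_verified"; parentId: string }
  | { kind: "granted"; parentId: string; grantedAt: Date }
  | { kind: "refused" };

type ConsentEvent =
  | { type: "parent_identity_verified"; parentId: string }
  | { type: "parent_granted"; at: Date }
  | { type: "parent_refused" };

function nextState(state: ConsentState, event: ConsentEvent): ConsentState {
  // Refusal or withdrawal ends consent from any state.
  if (event.type === "parent_refused") return { kind: "refused" };
  if (event.type === "parent_identity_verified") {
    return state.kind === "awaiting_parent"
      ? { kind: "parent_verified", parentId: event.parentId }
      : state;
  }
  // event.type is "parent_granted": it counts only after verification,
  // so a grant from an unverified adult leaves the state unchanged.
  return state.kind === "parent_verified"
    ? { kind: "granted", parentId: state.parentId, grantedAt: event.at }
    : state;
}

// Collection from the child is allowed only in the "granted" state.
const mayCollect = (s: ConsentState): boolean => s.kind === "granted";
```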

School Consent Mechanism

For platforms that primarily serve schools, you need a documented consent flow where authorized school administrators can provide consent on behalf of parents — with clear limitations on what data collection that consent covers. The consent must be specific, documented, and revocable.
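One way to make school consent specific, documented, and revocable is to store it as a record that names its purposes, its grantor, and an optional revocation time. The field names below are hypothetical.

```typescript
// Hypothetical school authorization record: specific (listed purposes),
// documented (who granted it and when), and revocable.
interface SchoolAuthorization {
  districtId: string;
  grantedBy: string; // authorized administrator's account ID
  grantedAt: Date;
  purposes: ReadonlySet<string>; // educational purposes named in the vendor agreement
  studentIds: ReadonlySet<string>;
  revokedAt?: Date;
}

// True if the authorization covers this student and purpose at time `at`.
function coveredBySchool(auth: SchoolAuthorization, studentId: string, purpose: string, at: Date): boolean {
  if (at.getTime() < auth.grantedAt.getTime()) return false;
  if (auth.revokedAt !== undefined && at.getTime() >= auth.revokedAt.getTime()) return false;
  return auth.studentIds.has(studentId) && auth.purposes.has(purpose);
}

// Revocation returns an updated copy instead of deleting the record, so
// the original grant stays on file for audit.
function revoke(auth: SchoolAuthorization, at: Date): SchoolAuthorization {
  return { ...auth, revokedAt: at };
}
```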

Ongoing Age Re-Verification

A user who registered at 12 may since have turned 13. A parent who consented for their child last year may since have revoked that consent. Age verification isn’t a one-time event: it needs to be repeated periodically and respond to changes in status.
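One way to make re-verification both periodic and responsive is to check a cached status at the start of each session. The 180-day interval and the `AgeStatus` shape are assumptions for illustration, not values from COPPA or from any vendor.

```typescript
// Hypothetical cached status for one account. The platform stores only
// threshold results, so it cannot compute the exact day a child turns 13.
interface AgeStatus {
  under13: boolean;
  verifiedAt: Date;
  parentalConsentActive: boolean;
}

// Assumed policy value, not a regulatory figure.
const REVERIFY_AFTER_DAYS = 180;
const DAY_MS = 24 * 60 * 60 * 1000;

type StatusAction = "ok" | "reverify_age" | "suspend_collection";

function checkStatus(status: AgeStatus, now: Date): StatusAction {
  // A withdrawn parental consent stops collection for an under-13 user
  // immediately, however recent the age check is.
  if (status.under13 && !status.parentalConsentActive) return "suspend_collection";
  // Otherwise re-run the age check once the cached result is stale, which
  // also catches children who have since turned 13.
  const elapsedDays = (now.getTime() - status.verifiedAt.getTime()) / DAY_MS;
  return elapsedDays >= REVERIFY_AFTER_DAYS ? "reverify_age" : "ok";
}
```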

Data Minimization Architecture

The amended COPPA rule’s data retention limits require platforms to build with data minimization in mind. Collect only what’s necessary for the stated educational purpose. Delete it when it’s no longer needed. Don’t repurpose it without fresh, specific consent.
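Data minimization can be enforced mechanically at ingestion with a per-purpose allow-list of fields. The purposes and field names are hypothetical; the design choice is to fail closed, so a purpose with no allow-list stores nothing.

```typescript
// Hypothetical allow-list: for each stated purpose, the only fields the
// platform keeps. Everything else is dropped before it reaches storage.
const ALLOWED_FIELDS: Record<string, readonly string[]> = {
  grade_assignment: ["studentId", "assignmentId", "answers", "submittedAt"],
  reading_level: ["studentId", "passageId", "score"],
};

// Returns a copy of `input` containing only the fields allowed for
// `purpose`. Unknown purposes throw instead of silently storing everything.
function minimize(purpose: string, input: Record<string, unknown>): Record<string, unknown> {
  const allowed = ALLOWED_FIELDS[purpose];
  if (allowed === undefined) throw new Error(`no collection allowed for purpose: ${purpose}`);
  const out: Record<string, unknown> = {};
  for (const key of allowed) {
    if (key in input) out[key] = input[key];
  }
  return out;
}
```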

How Xident Solves This

Xident’s age verification API is built for exactly this kind of multi-stakeholder, compliance-heavy environment.

Age threshold classification without data exposure. Xident verifies whether a user meets a specific age threshold (12+, 13+, 16+, 18+) without collecting, storing, or transmitting their date of birth, government ID images, or biometric templates to your servers. The verification happens on-device using privacy-preserving architecture, and your platform receives only a cryptographic yes/no token.
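To illustrate what receiving only a cryptographic yes/no token means on the platform side, here is a sketch using Ed25519 signatures from Node’s built-in `crypto` module. The token layout (base64url JSON payload, a dot, base64url signature) and the `AgeClaim` fields are invented for this example and are not Xident’s actual format; the vendor’s documentation defines the real one.

```typescript
import { generateKeyPairSync, sign, verify, KeyObject } from "node:crypto";

// Hypothetical claim: only the threshold and the yes/no answer, with no
// birthdate, ID image, or biometric template.
interface AgeClaim {
  threshold: 12 | 13 | 16 | 18;
  meets: boolean;
  exp: number; // expiry, seconds since the Unix epoch
}

// Issuer side (stands in for the verification provider): sign the claim.
function issueToken(claim: AgeClaim, privateKey: KeyObject): string {
  const payload = Buffer.from(JSON.stringify(claim)).toString("base64url");
  const sig = sign(null, Buffer.from(payload), privateKey).toString("base64url");
  return `${payload}.${sig}`;
}

// Platform side: check signature and expiry; return the claim or null.
function verifyToken(token: string, publicKey: KeyObject, nowSec: number): AgeClaim | null {
  const parts = token.split(".");
  if (parts.length !== 2) return null;
  const [payload, sig] = parts;
  const signatureOk = verify(null, Buffer.from(payload), publicKey, Buffer.from(sig, "base64url"));
  if (!signatureOk) return null;
  const claim = JSON.parse(Buffer.from(payload, "base64url").toString("utf8")) as AgeClaim;
  // Reject expired tokens even when the signature is valid.
  return claim.exp > nowSec ? claim : null;
}

// Demo key pair; a real platform would hold only the issuer's public key.
const issuerKeys = generateKeyPairSync("ed25519");
```

An asymmetric signature means the platform needs only the issuer’s public key, so a compromised application server cannot mint valid tokens.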

Sub-3-second verification. Students aren’t going to sit through a 30-second identity verification flow before starting their math homework. Xident’s on-device ML models deliver age classification in under 3 seconds, with a 0.03% false pass rate, and are designed to meet the “highly effective” age assurance standards applied by the UK’s Ofcom, Germany’s KJM, and France’s ARCOM.

Parental consent verification. Xident supports verifiable parental consent flows where the parent’s identity and relationship to the child are confirmed before consent is granted. This satisfies both COPPA’s “verifiable parental consent” standard and GDPR Article 8’s requirements for parental authorization.

Token-based returning users. Once a student is verified, Xident issues a reusable credential token. The student doesn’t need to re-verify every session — the token proves their age status without repeating the verification process. This eliminates friction while maintaining compliance.
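A reusable credential still has to honor expiry and revocation, for example when a parent withdraws consent. The sketch below shows a hypothetical session-start check against a server-side revocation set; the field names are assumptions.

```typescript
// Hypothetical stored credential for a returning user.
interface StoredCredential {
  tokenId: string;
  accountId: string;
  expiresAt: number; // milliseconds since the Unix epoch
}

type SessionDecision = "reuse" | "reverify";

// Reuse the credential only if it exists, has not been revoked (e.g. after
// a parent withdrew consent), and has not expired.
function onSessionStart(
  cred: StoredCredential | undefined,
  revoked: ReadonlySet<string>,
  nowMs: number,
): SessionDecision {
  if (cred === undefined) return "reverify";
  if (revoked.has(cred.tokenId)) return "reverify";
  return cred.expiresAt > nowMs ? "reuse" : "reverify";
}
```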

School administrator consent dashboard. For EdTech vendors working with school districts, Xident provides a consent management interface where authorized administrators can grant, monitor, and revoke consent for groups of students. Every consent action is logged for audit purposes.
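One common way to make a consent log tamper-evident is a hash chain, where each entry’s SHA-256 hash covers the previous entry’s hash. This is a generic technique, not a description of how Xident’s dashboard stores its logs; the entry fields are hypothetical.

```typescript
import { createHash } from "node:crypto";

type ConsentAction = "grant" | "revoke";

// Hypothetical audit entry for an administrator consent action.
interface ConsentLogEntry {
  at: string; // ISO 8601 timestamp
  adminId: string;
  action: ConsentAction;
  studentIds: string[];
  prevHash: string;
  hash: string;
}

const GENESIS = "0".repeat(64);

function entryHash(e: Omit<ConsentLogEntry, "hash">): string {
  const body = JSON.stringify([e.at, e.adminId, e.action, e.studentIds, e.prevHash]);
  return createHash("sha256").update(body).digest("hex");
}

// Appends an entry whose hash covers the previous entry's hash, so editing
// or deleting an earlier entry breaks every later link.
function append(log: ConsentLogEntry[], at: string, adminId: string, action: ConsentAction, studentIds: string[]): ConsentLogEntry[] {
  const prevHash = log.length > 0 ? log[log.length - 1].hash : GENESIS;
  const base = { at, adminId, action, studentIds, prevHash };
  return [...log, { ...base, hash: entryHash(base) }];
}

// Recomputes every link; returns false if any entry was altered.
function verifyChain(log: ConsentLogEntry[]): boolean {
  let prev = GENESIS;
  for (const e of log) {
    if (e.prevHash !== prev || e.hash !== entryHash(e)) return false;
    prev = e.hash;
  }
  return true;
}
```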

Zero biometric data retention. Xident’s on-device processing means biometric data never leaves the user’s device. There are no facial templates to store, no voiceprints to protect, no biometric database to breach. This directly addresses the amended COPPA rule’s expanded definition of personal information.

The Global Compliance Picture

COPPA isn’t the only regulation EdTech platforms need to worry about. The global landscape is converging on stricter age verification requirements for education technology.

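EU GDPR and the Digital Services Act. Under GDPR Article 8, services that rely on consent to process the data of users under 16 must obtain parental consent, though member states may lower that threshold to as little as 13. The Digital Services Act adds obligations for online platforms accessible to minors: Article 28 requires a high level of privacy, safety, and security for them, and the European Commission’s guidelines on that article recommend age assurance measures where the risks warrant it.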
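Because the threshold varies by country, a platform serving both US and EU students needs a jurisdiction lookup before deciding whether parental consent is required. The table below is an illustrative subset of national ages under GDPR Article 8 and should be checked against current law; the sketch also assumes consent is the legal basis for processing.

```typescript
// Illustrative subset of national "digital age of consent" values under
// GDPR Article 8; verify against current national law before relying on it.
const GDPR_CONSENT_AGE: Partial<Record<string, number>> = {
  AT: 14, BE: 13, DE: 16, ES: 14, FR: 15, IE: 16, IT: 14, NL: 16, SE: 13,
};

// Article 8 default, used as a conservative fallback for unlisted countries.
const GDPR_DEFAULT_AGE = 16;
// COPPA protects children under 13.
const COPPA_AGE = 13;

type Jurisdiction = { regime: "US" } | { regime: "EU"; country: string };

// Age below which parental consent is required in this jurisdiction.
function parentalConsentAge(j: Jurisdiction): number {
  if (j.regime === "US") return COPPA_AGE;
  return GDPR_CONSENT_AGE[j.country] ?? GDPR_DEFAULT_AGE;
}

function needsParentalConsent(age: number, j: Jurisdiction): boolean {
  return age < parentalConsentAge(j);
}
```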

UK Age Appropriate Design Code. The UK’s Children’s Code requires services “likely to be accessed by children” to establish users’ ages with a level of certainty appropriate to the risks (or apply the code’s standards to all users) and to set high-privacy defaults for young users. EdTech platforms serving UK students are squarely in scope.

Canada’s privacy commissioners’ resolution. In 2025, Canada’s federal, provincial, and territorial privacy commissioners issued a joint resolution explicitly prohibiting EdTech vendors from using student personal information for secondary purposes — and requiring robust age verification to enforce these restrictions.

Australia’s eSafety enforcement. Australia’s age verification codes, effective March 2026, apply to any online service that could expose minors to harmful content. AI-powered EdTech tools that generate content — including homework assistants, writing aids, and tutoring chatbots — may fall within scope if they can produce restricted material.

The Cost of Getting This Wrong

The FTC can seek civil penalties of up to $53,088 per violation of COPPA, the inflation-adjusted maximum set in 2025 (the cap is adjusted each year). For a platform with millions of student users, each instance of unauthorized data collection from a child could constitute a separate violation. The math gets catastrophic fast.
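To make that concrete, here is the arithmetic under one purely illustrative assumption: 10,000 children whose data was collected without valid consent, each counted as a single violation at the $53,088 per-violation maximum. This is a statutory ceiling, not a prediction; courts weigh factors such as degree of culpability, prior conduct, and ability to pay, so actual penalties can be far lower.

```latex
10{,}000 \text{ violations} \times \$53{,}088 \text{ per violation} = \$530{,}880{,}000
```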

But financial penalties aren’t the only risk. School districts are increasingly requiring EdTech vendors to demonstrate COPPA and FERPA compliance as a procurement condition. Certifications like 1EdTech’s TrustEd Apps seal and Student Privacy Pledge compliance are becoming table stakes for winning district contracts. If your platform can’t demonstrate robust age verification and consent management, you’re not just risking fines — you’re losing deals.

The reputational cost is perhaps the highest. Parents and school administrators trust EdTech platforms with their children’s data. A COPPA enforcement action doesn’t just produce a fine — it produces headlines that destroy that trust.

Getting Started

The compliance deadline isn’t coming — it’s here. If your EdTech platform doesn’t have proper age verification in place, every day of continued operation without it is a day of accumulated regulatory exposure.

Xident’s age verification API can be integrated in under 5 minutes with our JavaScript SDK, React components, or server-side API. You can start with our Starter plan at $9/month to validate the integration, then scale as your user base grows.

This post is for informational purposes and does not constitute legal advice. Consult qualified legal counsel for guidance on your specific compliance obligations.