In February 2026, security researcher Celeste and colleagues published findings that changed the age verification industry overnight. Persona, the identity verification vendor powering Discord’s UK age verification trial, had exposed its entire government dashboard codebase — 53 megabytes across 2,456 files — sitting unprotected on a FedRAMP-authorized government server. No exploit was needed. The files were simply public.

Within days, Discord cut ties with Persona and delayed its global age verification rollout to H2 2026. The EFF published a scathing analysis. Privacy advocates pointed out that users who consented to a simple age check had unknowingly submitted their data to a system that performs 269 distinct verification checks, screens individuals against terrorism watchlists, and can retain biometric data for up to three years.

The incident wasn’t just a security failure by one vendor. It was a structural indictment of the centralized identity verification model that still dominates the market — and a case study in why architecture decisions made during vendor selection carry consequences that no SLA or compliance certificate can fully mitigate.

What the Exposure Actually Revealed

The exposed codebase wasn’t a staging environment or a test instance. It was hosted on a .gov domain, on a server that had cleared FedRAMP authorization, the US government’s security assessment framework for cloud services. That a FedRAMP-authorized system could be this exposed should concern anyone who treats compliance certifications as proof of security.

Here’s what the files showed:

Scope creep beyond age verification. Persona’s system performs facial recognition against watchlists, screens “adverse media” across 14 categories including terrorism and espionage, and assigns risk scores. A platform that integrates Persona for age verification is, whether it realizes it or not, feeding user data into a surveillance apparatus whose scope far exceeds “is this person over 18.”

Long retention of biometric data. The system retains IP addresses, browser and device fingerprints, government ID numbers, phone numbers, names, faces, and biometric analytics — selfie pose detection, age-inconsistency flags, suspicious-entity scores — for up to three years. That’s three years of biometric data sitting in a centralized system for a verification event that should take seconds and then be forgotten.

Government data-sharing pathways. The presence of Persona’s code on a government server raised immediate questions about what data flows between the commercial verification service and federal agencies. Researchers at State of Surveillance documented that Persona files reports on users to federal agencies, a capability that was not disclosed to users who submitted their information for a Discord age check.

Discord’s statement that Persona “did not meet the bar” for on-device age estimation was revealing. It confirmed that Discord had been evaluating on-device processing as a requirement — and that Persona’s centralized, server-side approach was the architectural mismatch that ended the relationship, not just the security exposure.

The Centralized Verification Honeypot Problem

The Persona incident is a specific example of a general problem: centralized identity verification systems create honeypots by design.

When a platform integrates a centralized verification vendor, every user’s identity documents, selfies, and biometric data flow to the vendor’s servers. The vendor stores this data, sometimes for years, for all of its clients. This means a single vendor breach exposes not just one platform’s users, but the users of every platform that relies on that vendor. Persona continues to provide verification for Roblox, ChatGPT, and Lime, among others. A breach of the underlying data store would be catastrophic across all of them.

The economics of centralized verification create a perverse incentive. The more data a vendor accumulates, the more valuable their dataset becomes — both for legitimate purposes like fraud model training and for illegitimate purposes if the data is compromised. The vendor’s incentive is to retain as much data as possible for as long as possible. The user’s interest is exactly the opposite.

This isn’t a theoretical concern. The identity verification industry has seen multiple significant breaches in recent years. AU10TIX, another major vendor, disclosed a breach in 2024 that exposed identity documents. The pattern is consistent: centralized data stores attract sophisticated attackers, and the blast radius of a successful attack scales with the size of the data store.

What Discord Got Right (Eventually)

Discord’s response to the incident deserves credit on one specific point: the company publicly committed to architectural requirements that would prevent a recurrence. Discord stated that any future verification partner must perform facial age estimation entirely on-device, with no biometric data transmitted to external servers.

This is the correct architectural position for age verification. Here’s why:

On-device processing eliminates the honeypot. If biometric data never leaves the user’s device, there is no centralized data store to breach. The vendor never has access to the raw data, which means a vendor compromise cannot expose user biometrics.

Minimal data transmission reduces scope creep. When the verification vendor receives only a binary age signal — “over 18: yes/no” — rather than raw identity documents, there is no opportunity for the vendor to screen users against watchlists, build biometric profiles, or share data with third parties. The data minimization is enforced by architecture, not by policy.

Device-level verification enables genuine consent. Users can understand and consent to a process that happens on their device and transmits only a yes/no result. They cannot meaningfully consent to a process where their selfie is transmitted to a server that performs 269 checks they were never told about.
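The claim that data minimization can be enforced by architecture rather than by policy fits in a few lines of code. The following is a minimal sketch under stated assumptions, not any vendor’s client. `estimate_age_locally` is a hypothetical stub standing in for an on-device model, and `AgeSignal`, `build_outbound_payload`, and `server_accepts` are illustrative names. The raw image and the numeric estimate stay inside the client function. The only data serialized for the network is an event ID and a boolean, and the server rejects any payload that carries extra fields.

```python
import json
import uuid
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AgeSignal:
    """The only structure this hypothetical client ever sends over the network."""
    event_id: str
    over_18: bool


def estimate_age_locally(selfie_pixels: bytes) -> float:
    """Hypothetical stub for an on-device age-estimation model.

    A real client would run a model on the image here; this placeholder
    returns a fixed value so the sketch is runnable.
    """
    return 24.0


def build_outbound_payload(selfie_pixels: bytes, threshold: float = 18.0) -> str:
    # The raw image and the numeric estimate never leave this function;
    # only the thresholded boolean is placed in the payload.
    estimate = estimate_age_locally(selfie_pixels)
    signal = AgeSignal(event_id=str(uuid.uuid4()), over_18=estimate >= threshold)
    return json.dumps(asdict(signal))


ALLOWED_KEYS = {"event_id", "over_18"}


def server_accepts(payload: str) -> bool:
    # Server-side allowlist: any extra field (e.g. an image) causes rejection,
    # so a client change cannot silently start uploading biometric data.
    data = json.loads(payload)
    return set(data) == ALLOWED_KEYS and isinstance(data["over_18"], bool)
```

The server-side allowlist matters as much as the client code. It turns "we don’t collect biometrics" from a promise into a check that fails loudly if a client update ever tries to send more.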

Discord also committed to publishing documentation of every verification vendor and their practices, and to expanding verification options beyond facial age estimation to include credit card verification and other modalities. These are reasonable steps, but the architectural commitment to on-device processing is the one that actually changes the risk profile.

The Regulatory Tailwind Toward Privacy-First Architecture

The Persona incident didn’t happen in a regulatory vacuum. Multiple regulatory frameworks are now explicitly pushing toward the kind of privacy-first architecture that would have prevented this exposure.

The EU Age Verification Blueprint, announced on April 15, 2026, uses zero-knowledge proof cryptography to enable age verification without disclosing personal data. The blueprint is being piloted by France, Denmark, Greece, Italy, Spain, Cyprus, and Ireland, and can be integrated into the EU Digital Identity Wallet. Its design philosophy is explicit: prove the attribute (age), not the identity.
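A real zero-knowledge construction is beyond a short example, and the sketch below is not a zero-knowledge proof. It illustrates only the narrower principle of proving the attribute rather than the identity. It does this with salted-hash selective disclosure, the technique used in formats such as SD-JWT. The issuer commits to each attribute separately and signs only the list of commitments, and the holder then reveals the age attribute and its salt, nothing else. To keep the sketch standard-library-only, HMAC with a shared key stands in for the issuer’s signature. A real deployment would use an asymmetric signature so that verifiers hold no signing secret. All function names are hypothetical.

```python
import hashlib
import hmac
import json
import secrets
from typing import Optional

ISSUER_KEY = secrets.token_bytes(32)  # stand-in for an issuer signing key (assumption)


def digest(salt: str, name: str, value) -> str:
    # Each attribute is committed to separately as SHA-256 over (salt, name, value).
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def issue_sd_credential(attributes: dict) -> dict:
    """Issuer: salt and hash every attribute, then sign only the digest list."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in attributes.items()}
    digests = sorted(digest(salt, name, value) for name, (salt, value) in disclosures.items())
    signature = hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()
    return {"digests": digests, "signature": signature, "disclosures": disclosures}


def present(credential: dict, reveal: str) -> dict:
    """Holder: send the signed digests plus the salt and value of ONE attribute."""
    salt, value = credential["disclosures"][reveal]
    return {"digests": credential["digests"], "signature": credential["signature"],
            "disclosed": [salt, reveal, value]}


def verify(presentation: dict) -> Optional[dict]:
    """Verifier: check the signature, then check the disclosed attribute was committed."""
    expected = hmac.new(ISSUER_KEY, json.dumps(presentation["digests"]).encode(),
                        hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, presentation["signature"]):
        return None
    salt, name, value = presentation["disclosed"]
    if digest(salt, name, value) not in presentation["digests"]:
        return None
    return {name: value}
```

For a credential issued over a name, a birth date, and `age_over_18`, presenting only `age_over_18` gives the verifier the boolean plus opaque digests. Each digest is salted, so the verifier cannot recover the hidden name or birth date by guessing values and hashing them.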

The UK’s Information Commissioner’s Office fined Reddit £14.5 million in early 2026 for relying on self-declaration and failing to protect children’s data. At the same time, the ICO’s guidance emphasizes data minimization: verification systems should collect only what is strictly necessary for the age check and delete it immediately afterward.

Germany’s KJM already requires that age verification systems demonstrate technical data minimization. Persona’s three-year retention of biometric data would be non-compliant with KJM standards on its face.

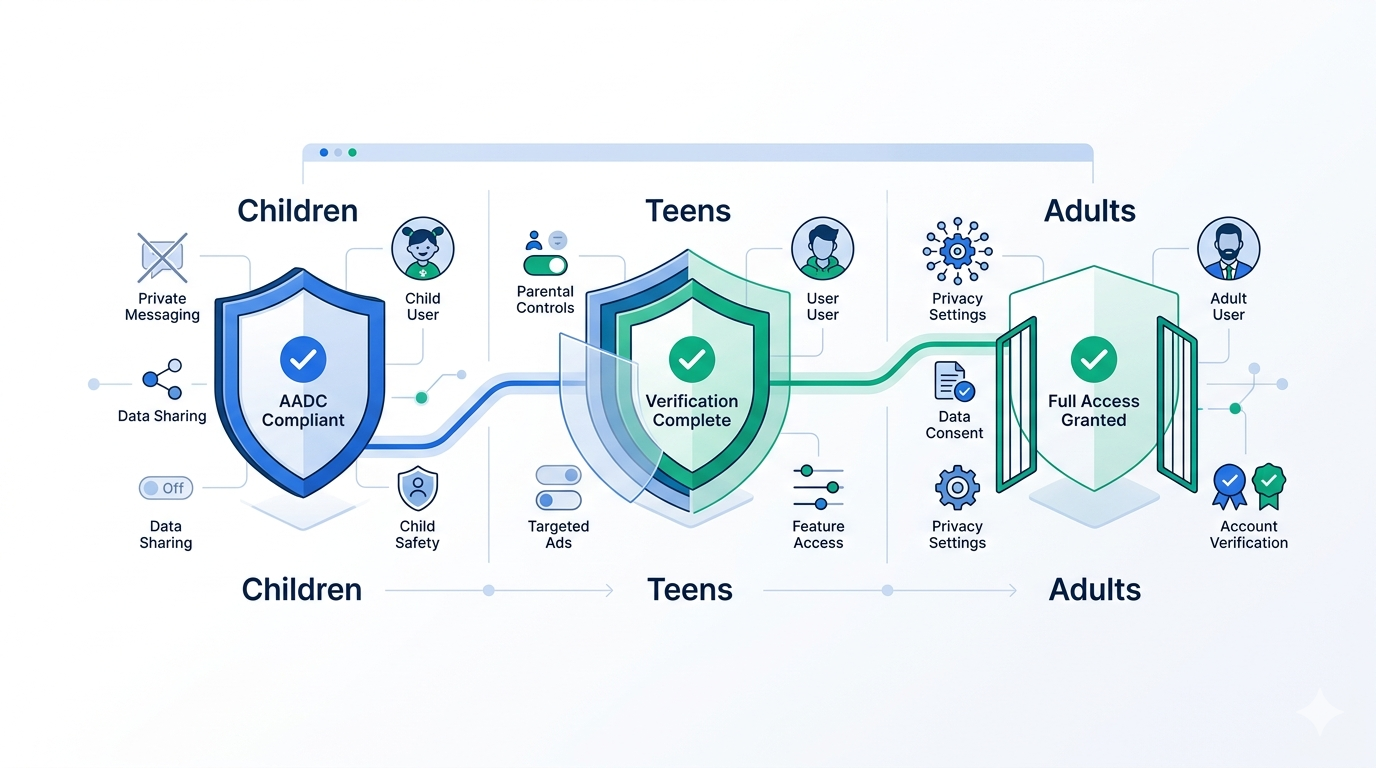

COPPA’s amended rules, effective April 22, 2026, require verifiable parental consent mechanisms that are both effective and privacy-preserving — a combination that centralized biometric databases structurally cannot deliver.

The regulatory direction is clear: age verification must work, but it must work without creating the kind of surveillance infrastructure that the Persona exposure revealed. Platforms that choose centralized verification vendors today are making a bet against the regulatory trajectory.

What to Look for in a Verification Vendor After Persona

The Persona incident should change how platforms evaluate verification vendors. Here are the architectural questions that should now be non-negotiable in any vendor assessment:

Where does biometric processing happen? If the answer is “on our servers,” the vendor is building the same honeypot that Persona built. On-device processing is the only architecture that eliminates centralized biometric data stores. Ask for a technical architecture diagram and verify that raw biometric data never leaves the user’s device.

What data does the vendor retain, and for how long? “As long as legally required” is not an acceptable answer — it’s a blank check. The vendor should retain only the minimum data needed for audit compliance (typically a verification event ID and result, not the biometric data itself), and retention periods should be measured in days or hours, not years.

What else does the vendor do with the data? Persona’s 269-check pipeline was invisible to platforms that integrated it for age verification. Ask vendors explicitly: does the system perform any processing beyond the stated verification purpose? Does the vendor share data with government agencies, law enforcement, or third parties? Is the vendor’s full processing scope documented in the user-facing privacy notice?

Is the vendor’s architecture auditable? Request third-party security audit reports, penetration test results, and architecture reviews. A FedRAMP authorization was not sufficient to prevent Persona’s exposure — certifications are a baseline, not a guarantee.

What happens if the vendor is breached? Understand the blast radius. If the vendor stores raw biometric data for multiple clients, a single breach exposes all of them. If the vendor processes on-device and transmits only verification results, a breach of the vendor’s systems cannot expose user biometrics because the vendor never had them.
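The retention question is easier to evaluate with a concrete shape in mind. Here is a minimal sketch of a vendor-side audit record limited to "a verification event ID and result." It assumes a hypothetical 24-hour retention window, a figure that is illustrative only and not a legal recommendation. The record type has no field that could hold a selfie, an embedding, or a document number, so the store cannot accumulate them. `purge_expired`, also a hypothetical name, discards anything older than the window.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(hours=24)  # illustrative window (assumption)


@dataclass(frozen=True)
class AuditRecord:
    # Enough for an auditor to confirm a check happened; nothing biometric.
    event_id: str
    result: bool
    verified_at: datetime


def purge_expired(records: list[AuditRecord], now: datetime) -> list[AuditRecord]:
    """Keep only records that are still inside the retention window."""
    return [r for r in records if now - r.verified_at < RETENTION]
```

In practice a purge like this would run on a schedule. With this record shape, even a breach that lands before the purge exposes only event IDs, booleans, and timestamps.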

How Xident’s Architecture Avoids the Centralized Trap

Xident was designed from the start around the principle that biometric data should never be centralized — not because centralization is inconvenient, but because it is structurally incompatible with privacy-preserving age verification.

Here’s how Xident’s architecture differs from the model the Persona incident exposed:

On-device liveness detection and age estimation. Xident runs liveness detection and facial age estimation models directly on the user’s device. The raw selfie data is processed locally and never transmitted to Xident’s servers. The server receives only the verification result and a cryptographic attestation of the on-device process.

No biometric data retention. Xident does not store selfies, facial embeddings, or biometric templates. The verification event produces a result token — a cryptographically signed assertion that a verification occurred, what the result was, and when it happened. The token is sufficient for audit compliance without retaining the underlying biometric data.

Scope-limited processing. Xident performs age verification. It does not screen users against watchlists, assign risk scores, cross-reference government databases, or perform any processing beyond the stated verification purpose. The system is architecturally incapable of scope creep because it never has access to the data that would enable it.

Reusable verification credentials. Once a user is verified, Xident issues a reusable credential that can be presented to other platforms without repeating the verification process. This reduces the number of times a user needs to submit to any verification process, further minimizing the aggregate exposure of personal data across the ecosystem.

This architecture means that even in a worst-case scenario — a complete compromise of Xident’s infrastructure — an attacker would find verification event logs with anonymized tokens. They would not find selfies, government IDs, biometric templates, or any of the data that made the Persona exposure dangerous.

The Architecture Decision Is the Security Decision

The Persona-Discord incident clarified something that the industry has debated for years: the most important security decision in age verification is the architectural decision about where data is processed and what data is retained.

Compliance certifications, penetration tests, and security questionnaires are necessary but not sufficient. They assess whether a system is implemented correctly, not whether the system’s architecture is fundamentally sound. Persona was FedRAMP-authorized. Its architecture was still a liability.

The platforms that will be best positioned — both for regulatory compliance and for user trust — are the ones that choose verification architectures where a vendor breach cannot expose user biometrics, because the vendor never had them in the first place.

That’s not a feature. It’s a design philosophy. And after February 2026, it’s no longer optional.