If you operate a gaming platform — whether iGaming, online gambling, or a free-to-play title with loot boxes and microtransactions — 2026 is the year regulators stopped treating “Are you 18+? Yes/No” as age verification. The checkbox era is over. Across Europe, Latin America, and the United States, new legislation now demands verifiable, auditable proof that your players meet minimum age thresholds before they can access gambling mechanics, purchase randomized content, or enter age-restricted environments.

The stakes are concrete: license revocation, seven-figure fines, app store removal, and reputational damage that no marketing budget can repair.

Here’s what’s changed, what you need to implement, and how to do it without destroying your onboarding funnel.

The Regulatory Pressure Is Coming from Every Direction

Europe: PEGI Overhauls Age Ratings for Monetization Mechanics

The Pan European Game Information (PEGI) system — which governs age ratings across 38 countries — announced its most significant overhaul in over a decade, effective June 2026. The new guidelines specifically target monetization mechanics that mimic gambling patterns:

- Paid random items (loot boxes, card packs, gacha mechanics): automatic PEGI 16 rating

- Time-limited microtransactions (flash sales, battle pass urgency): PEGI 12 minimum

- Mechanics incentivizing daily play (daily login rewards tied to purchases): PEGI 7 minimum

This isn’t cosmetic. A PEGI 16 rating for a game previously rated PEGI 7 or PEGI 12 means platform-level age gates on the Google Play Store, Apple App Store, and console storefronts. If your game triggers a PEGI 16 rating due to loot box mechanics, the storefront is now obligated to enforce age restrictions at download or purchase — and they will pass that compliance burden downstream to you.
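The rating rules listed above reduce to taking the highest floor triggered by any mechanic in the game. A minimal TypeScript sketch, assuming the three floors quoted in this article; the `Mechanic` labels and the `minimumPegiRating` helper are hypothetical names for illustration and are not part of any PEGI tooling:

```typescript
// Hypothetical labels for the three monetization mechanics listed above.
type Mechanic = "paidRandomItems" | "timeLimitedOffers" | "dailyPlayIncentives";

// Rating floors as quoted in this article; confirm against PEGI's published criteria.
const PEGI_FLOOR: Record<Mechanic, number> = {
  paidRandomItems: 16,
  timeLimitedOffers: 12,
  dailyPlayIncentives: 7,
};

// The effective minimum is the highest floor among the mechanics present,
// and never lower than the rating the content itself would earn.
function minimumPegiRating(contentRating: number, mechanics: Mechanic[]): number {
  return mechanics.reduce((floor, m) => Math.max(floor, PEGI_FLOOR[m]), contentRating);
}

console.log(minimumPegiRating(7, ["dailyPlayIncentives", "paidRandomItems"])); // 16
console.log(minimumPegiRating(18, ["timeLimitedOffers"])); // 18
```

A check like this could run in a build pipeline so that adding a loot box to a PEGI 7 title is flagged before submission rather than after a re-rating.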

Germany’s Bundesrat has gone further, pushing for games with paid randomized content to receive full gambling classification (18+), which would subject them to the Interstate Treaty on Gambling and the associated identity verification requirements under GlüStV 2021.

Brazil: Loot Box Ban for Minors Takes Effect

Brazil’s online child-safety law, signed in late 2025, bans the sale of loot boxes and randomized paid content to minors (under 18) starting March 2026. This isn’t a recommendation — it’s enforceable law with penalties. Platforms operating in Brazil must now implement age verification before allowing any randomized purchase mechanic.

For studios with significant LATAM player bases, this is a compliance cliff. If you’re selling card packs, cosmetic loot boxes, or gacha pulls in Brazil, you need age-gated purchase flows that go beyond self-declaration.

United States: State-Level Patchwork Expands to Gaming

The US regulatory picture is, predictably, fragmented. But the direction is clear:

FTC enforcement — The Federal Trade Commission has already taken action against gaming companies for deceptive loot box practices, requiring age verification, odds disclosure, and parental controls as part of consent decree settlements. These aren’t theoretical risks; they’re precedent.

State legislation — Multiple states are advancing bills that explicitly classify certain in-game monetization mechanics as gambling when targeted at minors. If your platform operates in states like California, New York, or Illinois — where consumer protection enforcement is aggressive — you need to assume that age verification for purchase mechanics is a near-term requirement, not a distant possibility.

COPPA implications — The FTC’s February 2026 policy statement on COPPA safe harbor creates a narrow safe harbor for platforms that implement age verification technologies. If you collect any data from users who might be under 13, having robust age verification in place is now a defensive compliance strategy, not just a feature.

Australia: Chance-Based Purchases Rated 15+

Australia’s Classification Board now rates games with chance-based purchases (including loot boxes) at a minimum of M, meaning not recommended for players under 15, while games containing simulated gambling are rated R 18+. Combined with Australia’s broader online age verification trials under the eSafety Commissioner, gaming platforms targeting Australian users face dual compliance requirements: content classification and identity verification.

The Real Problem: 6% of Minors Are Already Gambling

According to the UK Gambling Commission, approximately 6% of 11-17-year-olds reported spending money on age-restricted gambling in the past year — despite it being illegal. Roblox, which has over 70 million daily active users (a significant portion under 18), has already deployed facial age estimation for certain features.

This isn’t a niche compliance issue. It’s a systemic failure of existing age gates, and regulators are responding accordingly. The platforms that fail to implement real verification will be the ones made into examples.

What “Real” Age Verification Looks Like for Gaming

Self-declaration checkboxes and date-of-birth fields are not age verification. They’re liability theater. Here’s what regulators and platform holders now expect:

Tier 1: Identity Document Verification

The gold standard for iGaming and online gambling. The user submits a government-issued ID (passport, driver’s license, national ID card), which is validated for authenticity using optical character recognition (OCR), document template matching, and anti-tampering detection.

When to use it: Real-money gambling, high-stakes iGaming, any platform where regulatory license conditions require identity verification (not just age verification).

Trade-off: Highest assurance, but highest friction. Expect 15-30% drop-off if implemented as a hard gate at signup. Where license conditions permit it, platforms can defer full ID verification to the first deposit or withdrawal rather than requiring it at account creation.

Tier 2: Biometric Age Estimation

AI-powered facial analysis estimates a user’s age from a selfie or camera feed. No document required — the system returns a confidence-scored age estimate. Platforms like Roblox already use this approach.

When to use it: Loot box purchase gates, social features in gaming platforms, any context where you need to distinguish “clearly under 16” from “likely over 18” without full identity verification.

Trade-off: Lower friction than document verification, but lower assurance. Suitable for PEGI-tier compliance but insufficient for gambling license requirements. Works best as a first gate, with document verification triggered for edge cases.
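One way to express the "first gate, document fallback for edge cases" pattern is a buffer zone around the age threshold. The sketch below rests on assumptions: the `AgeEstimate` shape, the 3-year buffer, and the 0.8 confidence floor are illustrative values, not figures from any vendor or regulator.

```typescript
// Hypothetical result shape for a facial age estimate.
interface AgeEstimate {
  estimatedAge: number; // years
  confidence: number;   // 0.0 to 1.0
}

type GateDecision = "allow" | "deny" | "escalate_to_document";

// Illustrative tuning values; real ones depend on model error and regulator guidance.
const BUFFER_YEARS = 3;
const MIN_CONFIDENCE = 0.8;

function decide(estimate: AgeEstimate, threshold: number): GateDecision {
  // Low-confidence estimates are never trusted on their own.
  if (estimate.confidence < MIN_CONFIDENCE) return "escalate_to_document";
  // Clearly above the threshold: pass without a document.
  if (estimate.estimatedAge >= threshold + BUFFER_YEARS) return "allow";
  // Clearly below the threshold: block.
  if (estimate.estimatedAge < threshold - BUFFER_YEARS) return "deny";
  // Near the threshold: the estimate alone cannot settle it.
  return "escalate_to_document";
}
```

With a threshold of 18, an estimate of 25 passes, an estimate of 12 is blocked, and an estimate of 19 is sent to document verification. Widening the buffer trades more document checks for fewer wrongly admitted minors.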

Tier 3: Database Cross-Referencing

Age verification via cross-referencing user-provided data (name, date of birth, address) against authoritative databases — credit bureaus, voter rolls, telco records. No selfie, no document upload.

When to use it: Markets where database coverage is high (US, UK, Australia), users who prefer minimal friction, and as a complement to biometric or document methods.

Trade-off: Fast and low-friction, but relies on database coverage. Doesn’t work for users with thin credit files (which skews toward younger users — the exact population you’re trying to gate).

Tier 4: Device-Level Signals

Emerging approach where the operating system or device provides a privacy-preserving age signal to the application. Apple and Google are both building toward this model. Colorado is considering legislation that would mandate age verification at the OS level.

When to use it: As a supplementary signal. If the device confirms the user is 18+, you can reduce friction in your own verification flow. But device-level signals are not yet universally available, and you can’t rely on them as your sole verification method.
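The four tiers can be combined into an ordered chain that depends on the context. This is a rough sketch of one possible selection rule, not a regulatory recommendation; the `Context` fields and the `methodChain` function are hypothetical names introduced here.

```typescript
type Method = "device_signal" | "database" | "biometric" | "document";

// Hypothetical description of the situation in which verification runs.
interface Context {
  realMoneyGambling: boolean;     // license requires identity, not just age
  highDatabaseCoverage: boolean;  // e.g. the markets named under Tier 3
  deviceSignalAvailable: boolean; // OS-level age signal present
}

// Returns the methods to try in order, lowest friction first.
function methodChain(ctx: Context): Method[] {
  // Real-money gambling goes straight to the highest-assurance method.
  if (ctx.realMoneyGambling) return ["document"];
  const chain: Method[] = [];
  // A device signal is only a supplementary first attempt, never the sole method.
  if (ctx.deviceSignalAvailable) chain.push("device_signal");
  if (ctx.highDatabaseCoverage) chain.push("database");
  // Biometric estimation, with document verification as the last resort.
  chain.push("biometric", "document");
  return chain;
}
```

Because every non-gambling chain ends in `"document"`, a user who cannot be resolved by a cheaper method still has a path to verification.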

Integration Architecture: Where Age Verification Fits in the Gaming Stack

The key architectural decision is where in the user journey you enforce age verification. Get this wrong and you’ll tank conversion. Get it right and you’ll see minimal impact on your funnel while achieving compliance.

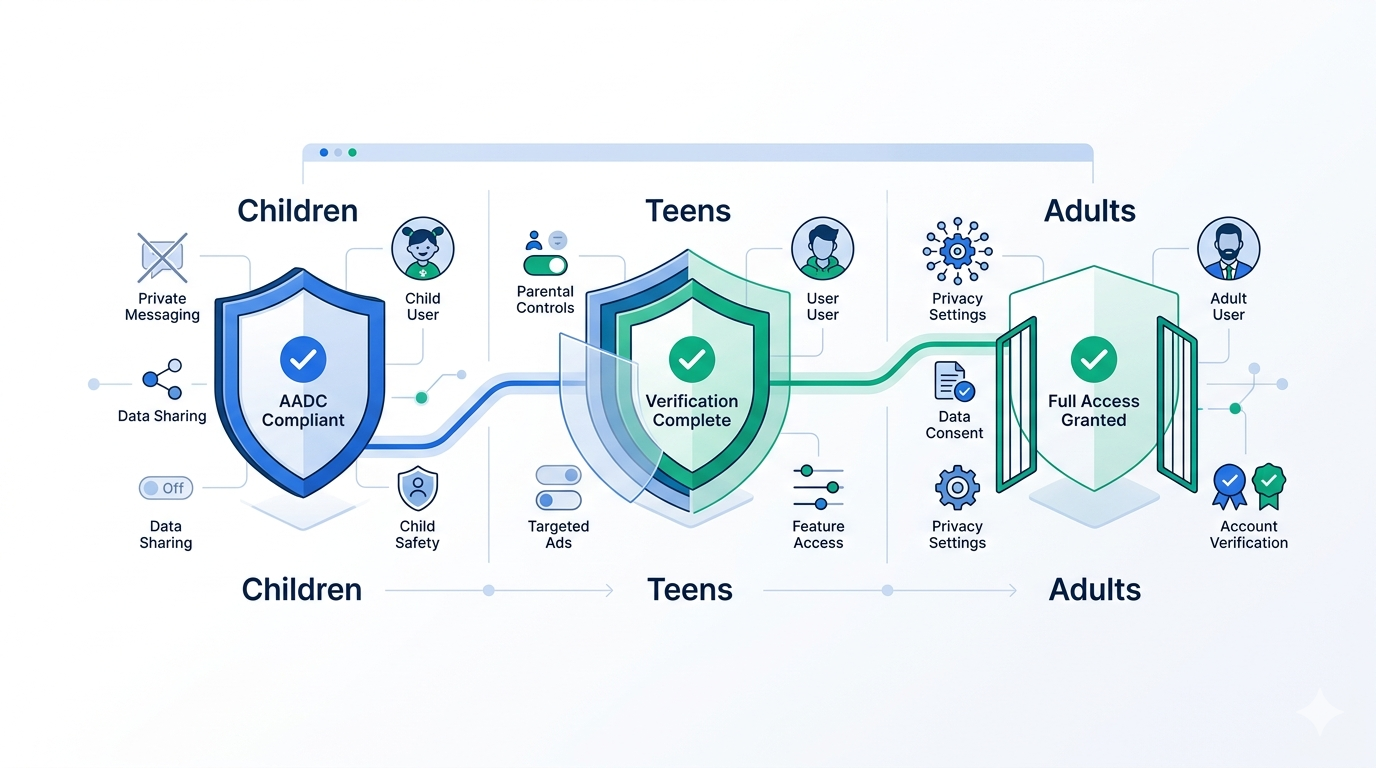

Recommended Flow for Free-to-Play with Monetization

Account Creation → Lightweight age gate (DoB + device signal)

↓

First Login → Biometric age estimation (if DoB indicates near-threshold)

↓

First Purchase → Full identity verification (for real-money or randomized items)

↓

Returning User → Token-based re-authentication (no re-verification)

This progressive verification approach matches the regulatory requirement to the risk level of the action. A user browsing free content doesn’t need the same verification as one purchasing loot boxes with real currency.
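Progressive verification can be modeled as a policy table that names the weakest assurance level allowed to perform each action. The level names, the actions, and the `stepUpNeeded` helper below are assumptions made for illustration, and the table simplifies the flow above by ignoring the "near-threshold" condition on the biometric step.

```typescript
// Assurance levels ordered from weakest to strongest (hypothetical names).
const LEVELS = ["none", "self_declared", "estimated", "document_verified"] as const;
type Level = (typeof LEVELS)[number];

type Action = "browse_free_content" | "use_social_features" | "buy_randomized_item";

// Illustrative policy: each action names the weakest level that may perform it.
const REQUIRED_LEVEL: Record<Action, Level> = {
  browse_free_content: "self_declared",
  use_social_features: "estimated",
  buy_randomized_item: "document_verified",
};

// Returns the level the user must still reach, or null if they may proceed.
function stepUpNeeded(current: Level, action: Action): Level | null {
  const required = REQUIRED_LEVEL[action];
  return LEVELS.indexOf(current) >= LEVELS.indexOf(required) ? null : required;
}

console.log(stepUpNeeded("self_declared", "browse_free_content")); // null
console.log(stepUpNeeded("self_declared", "buy_randomized_item")); // document_verified
```

Keeping the policy in one table makes it straightforward to tighten a single action for a single market without touching the rest of the flow.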

Recommended Flow for iGaming / Online Gambling

Account Creation → Full identity verification (document + liveness)

↓

First Deposit → KYC cross-check + source of funds (if required by license)

↓

Returning Session → Token-based authentication

↓

Withdrawal → Re-verification if threshold exceeded

For regulated gambling, there’s no progressive approach: you verify at the door. The goal is to make that verification as fast and frictionless as possible.
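The withdrawal step in the flow above amounts to a running-total check. A minimal sketch, assuming a cumulative limit since the last successful verification; the `Account` shape and the `REVERIFY_THRESHOLD` value are hypothetical, since real limits come from the conditions of each license.

```typescript
// Hypothetical account state relevant to the withdrawal check.
interface Account {
  // Total withdrawn since the last successful verification, in minor currency units.
  withdrawnSinceVerification: number;
}

// Illustrative limit (2,000.00 in minor units); not taken from any regulation.
const REVERIFY_THRESHOLD = 200_000;

// True when this withdrawal would push the running total past the limit.
function requiresReverification(account: Account, amount: number): boolean {
  return account.withdrawnSinceVerification + amount > REVERIFY_THRESHOLD;
}

// After a successful re-verification the running total starts again from zero.
function afterReverification(account: Account): Account {
  return { ...account, withdrawnSinceVerification: 0 };
}
```

Amounts are kept as integers in minor units so the comparison is not affected by floating-point rounding.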

Token-Based Returning Users

The single biggest UX win in age verification is not re-verifying returning users. Once a player has been verified, issue a cryptographic token that persists across sessions. The token proves “this user has been age-verified” without requiring the user to re-submit documents or selfies.

Xident’s token system works exactly this way: verify once, return seamlessly. The token is cryptographically bound to the user’s device and session, preventing token sharing or replay attacks.
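To make the idea concrete, here is a generic sketch of a device-bound, signed age token using an HMAC from Node's built-in `node:crypto` module. It is not a description of how Xident's tokens are built; the `AgeClaims` fields, the token layout, and the function names are assumptions for illustration only.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical claims: the verification outcome only, no identity documents.
interface AgeClaims {
  userId: string;
  deviceId: string;  // binds the token to one device
  ageGroup: string;  // e.g. "18+"
  expiresAt: number; // Unix time in milliseconds
}

function sign(payload: string, secret: string): string {
  return createHmac("sha256", secret).update(payload).digest("base64url");
}

// Token layout: base64url(JSON claims) + "." + HMAC signature.
function issueToken(claims: AgeClaims, secret: string): string {
  const payload = Buffer.from(JSON.stringify(claims)).toString("base64url");
  return `${payload}.${sign(payload, secret)}`;
}

// Returns the claims if the token is authentic, unexpired, and bound to this device.
function verifyToken(token: string, deviceId: string, secret: string, now: number = Date.now()): AgeClaims | null {
  const [payload, signature] = token.split(".");
  if (!payload || !signature) return null;
  const expected = Buffer.from(sign(payload, secret));
  const actual = Buffer.from(signature);
  // timingSafeEqual throws on unequal lengths, so compare lengths first.
  if (expected.length !== actual.length || !timingSafeEqual(expected, actual)) return null;
  const claims = JSON.parse(Buffer.from(payload, "base64url").toString("utf8")) as AgeClaims;
  if (claims.deviceId !== deviceId || claims.expiresAt <= now) return null;
  return claims;
}
```

In this sketch a token copied to another device fails the `deviceId` check and a modified token fails the signature check. Preventing replay on the same device would additionally need server-side state such as a session binding or revocation list, which is omitted here.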

Conversion Impact: The Numbers You Need

Gaming platforms are understandably concerned about conversion impact. Here’s what the data shows:

Document verification at signup drops conversion by 15-30%, depending on the market and demographic. Younger users (18-24) have higher drop-off rates because they’re less likely to have documents readily available.

Biometric age estimation drops conversion by 3-8%. It’s fast (under 5 seconds), requires no document, and most users are already comfortable with camera-based interactions from social media.

Progressive verification (lightweight gate first, full verification at purchase) shows less than 2% incremental drop-off at the purchase step, because users who reach the purchase intent threshold are already committed.

Token-based returning users show zero incremental friction — they pass through seamlessly.

The conversion math is clear: the cost of verification friction is far lower than the cost of regulatory non-compliance, which includes fines, license suspension, and app store removal.
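The drop-off ranges above translate into user counts with simple arithmetic. The sketch below applies them to a hypothetical cohort of 10,000 signups; the cohort size and the `usersRemaining` helper are assumptions, and the rates are the ones quoted in this section.

```typescript
// Users who continue past a gate with the given drop-off rate (0.0 to 1.0).
function usersRemaining(users: number, dropOffRate: number): number {
  return Math.round(users * (1 - dropOffRate));
}

const signups = 10_000; // hypothetical cohort size

// Document verification at signup: 15-30% drop-off.
console.log(usersRemaining(signups, 0.30), usersRemaining(signups, 0.15)); // 7000 8500

// Biometric age estimation: 3-8% drop-off.
console.log(usersRemaining(signups, 0.08), usersRemaining(signups, 0.03)); // 9200 9700
```

On these figures, a biometric first gate keeps between 700 and 2,700 more users per 10,000 signups than a hard document gate.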

Implementation with Xident

Xident provides the full verification stack that gaming platforms need:

Age threshold classification: Not just “is this person 18?” but configurable thresholds (12+, 16+, 18+, 21+, 25+) that map directly to PEGI ratings, ESRB ratings, and jurisdictional gambling ages.

Biometric age estimation: On-device ML-powered facial age analysis that returns a confidence-scored estimate in under 3 seconds. No server-side image storage. No PII retention.

Document verification with liveness: Full identity document validation with server-side liveness detection to prevent spoofing. Required for iGaming license compliance.

Token-based returning users: Verify once, return frictionlessly. Cryptographic tokens eliminate re-verification friction while maintaining compliance audit trails.

Multi-jurisdiction support: A single integration handles the regulatory patchwork across PEGI (Europe), ESRB (North America), Australia’s Classification Board, Brazil’s child-safety law, and US state-level requirements.
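As an illustration of how per-market thresholds might be resolved in application code, the sketch below treats the rating floors quoted in this article as age gates for randomized purchases. The `Market` codes, the table, and `minAgeFor` are hypothetical and are not part of the Xident SDK.

```typescript
// Hypothetical market codes; thresholds are the ones quoted in this article.
type Market = "EU_PEGI" | "BR" | "AU";

const RANDOMIZED_PURCHASE_MIN_AGE: Record<Market, number> = {
  EU_PEGI: 16, // paid random items under the revised PEGI criteria
  BR: 18,      // sale of loot boxes to minors banned
  AU: 15,      // classification floor for chance-based purchases
};

// When a player could fall under several regimes, apply the strictest one.
function minAgeFor(markets: Market[]): number {
  if (markets.length === 0) throw new Error("at least one market is required");
  return Math.max(...markets.map((m) => RANDOMIZED_PURCHASE_MIN_AGE[m]));
}

console.log(minAgeFor(["EU_PEGI"])); // 16
console.log(minAgeFor(["EU_PEGI", "BR"])); // 18
```

Taking the maximum is a conservative default for cases where the player's market cannot be determined with confidence.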

Quick Integration

```typescript
import { Xident } from '@xident/sdk';

Xident.init({
  apiKey: 'pk_live_your_key',
  flow: 'gaming',                     // Optimized for gaming UX
  ageThreshold: 16,                   // Match your PEGI/ESRB rating
  methods: ['biometric', 'document'], // Fallback chain
  onVerified: (result) => {
    // result.ageGroup → '16+', '18+', '21+'
    // result.token → store for returning sessions
    // result.confidence → 0.0 to 1.0
    unlockPurchaseFlow(result.token); // your own application function
  },
  onRejected: (result) => {
    // Handle underage or unverified users
    restrictToFreeContent(); // your own application function
  }
});
```

The SDK handles the full flow: biometric estimation first, document verification fallback if needed, and token issuance on success. Your game code receives a simple verified/rejected callback.

What Happens If You Don’t Act

The enforcement timelines are concrete:

- March 2026: Brazil’s loot box ban for minors is enforceable.

- June 2026: PEGI’s new monetization-aware ratings take effect. App stores enforce age gates.

- Ongoing: FTC consent decrees bind the companies that sign them and signal what the agency expects from the rest of the US gaming industry.

- 2026-2027: Germany’s push for gambling classification of loot boxes could trigger GlüStV compliance requirements.

- 2026: Australia’s eSafety age verification trials inform permanent legislation.

Waiting for “clarity” is no longer a strategy. The regulatory trajectory is one-directional, and the platforms that implement robust age verification now will have a structural advantage when enforcement accelerates.

Start Verifying

If you’re building or operating a gaming platform with age-restricted content, monetization mechanics, or real-money gambling, the compliance window is closing.

Get started with Xident — integrate once, comply everywhere. Our gaming-optimized verification flow is designed for the conversion sensitivity of gaming onboarding while meeting the strictest regulatory requirements in every major market.

Have questions about your specific compliance scenario? Talk to our team — we’ve helped gaming platforms across iGaming, mobile F2P, and console storefronts implement verification that regulators accept and players tolerate.